Training your own AI model used to require a team of ML engineers. Mistral just changed that. Mistral Forge, the French AI company’s new platform for training custom AI models on your own data, launched in March 2026 — and it’s designed to make fine-tuning accessible to any organization with data and a use case.

Here’s a full breakdown of what Mistral Forge is, how it works, who should use it, and how it stacks up against the competition.

What Is Mistral Forge?

Mistral Forge is a managed platform that lets you fine-tune Mistral’s base models on your own proprietary data, producing a custom AI model tailored to your specific domain, style, or task. You bring the data; Mistral provides the infrastructure, the training pipeline, and the resulting model, which can be hosted on Mistral’s cloud or deployed on your own infrastructure.

The platform is designed to be accessible to non-ML teams. You don’t need to write training code, configure GPU clusters, or manage distributed training infrastructure. You upload structured training data in a standard format and Forge handles the rest. The interface walks you through dataset validation, training configuration, evaluation, and deployment in a guided workflow.
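The article does not spell out Forge’s upload format. To illustrate what “structured training data” usually means, here is a minimal Python sketch that turns input/output pairs into chat-style JSONL: one JSON object per line, each with a `messages` list of `role`/`content` objects. This layout is an assumption based on common chat fine-tuning formats, not a confirmed Forge schema. The helper name `to_jsonl` and the sample data are hypothetical.

```python
import json


# Hypothetical helper: convert (prompt, ideal_response) pairs into chat-style
# JSONL. The exact schema Forge expects is an assumption here; check the
# platform documentation before uploading.
def to_jsonl(pairs, system_prompt=None):
    lines = []
    for prompt, response in pairs:
        messages = []
        if system_prompt:
            messages.append({"role": "system", "content": system_prompt})
        messages.append({"role": "user", "content": prompt})
        messages.append({"role": "assistant", "content": response})
        # One JSON object per line; ensure_ascii=False keeps accented text readable.
        lines.append(json.dumps({"messages": messages}, ensure_ascii=False))
    return "\n".join(lines) + "\n"


# Hypothetical support-desk examples.
pairs = [
    ("Where is my order #1042?",
     "Your order shipped on Monday and should arrive within 3 business days."),
    ("Can I change my delivery address?",
     "Yes. Open Orders, select the order, and choose Edit address before it ships."),
]
jsonl = to_jsonl(pairs, system_prompt="You are a support agent for Acme Ltd.")
print(jsonl)
```

Each pair becomes one self-contained line, which makes it easy to append, deduplicate, or split such a file without parsing it as a whole.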

Forge is built on top of Mistral’s most capable base models — currently Mistral Large 2 and Mistral Medium — and uses supervised fine-tuning (SFT) with optional reinforcement learning from human feedback (RLHF) for teams that want a higher-quality training signal.

Why Fine-Tuning Matters for Business

General-purpose AI models are impressively capable, but they have real limitations for specialized enterprise use cases. A base model trained on internet-scale data doesn’t know your internal terminology, your product catalog, your compliance requirements, or your company’s communication style. Prompt engineering can compensate for some of this, but it has limits — especially as use cases become more specific and demanding.

Fine-tuning addresses this at the model level. A model fine-tuned on your support tickets will typically handle customer queries better than a general model given a detailed system prompt. A model fine-tuned on your legal documents can draft contracts that match your house style. A model fine-tuned on your product documentation can answer technical questions with a level of domain-specific accuracy that is hard to reach through prompting alone.

How the Training Process Works on Forge

The Forge training workflow is straightforward. You start by preparing your dataset — pairs of inputs and desired outputs that represent the behavior you want the model to learn. Forge provides documentation and validation tools to help you format data correctly and identify quality issues before training begins.
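The article does not describe how Forge’s validation tools work. The sketch below only illustrates the kind of pre-upload checks that catch common quality issues: malformed JSON, missing or unknown roles, empty messages, examples whose last turn is not an assistant reply, and exact duplicates. It assumes the widely used chat-style `messages` layout. The function name `validate_jsonl` and its rules are hypothetical, not Forge’s actual validator.

```python
import json

ALLOWED_ROLES = {"system", "user", "assistant"}


def validate_jsonl(text):
    """Return a list of (line_number, problem) tuples; empty means no issues found."""
    problems = []
    seen = set()
    for lineno, line in enumerate(text.splitlines(), start=1):
        if not line.strip():
            continue  # ignore blank lines
        try:
            record = json.loads(line)
        except json.JSONDecodeError as exc:
            problems.append((lineno, f"invalid JSON: {exc.msg}"))
            continue
        messages = record.get("messages") if isinstance(record, dict) else None
        if not isinstance(messages, list) or not messages:
            problems.append((lineno, "missing or empty 'messages' list"))
            continue
        if not all(isinstance(m, dict) and m.get("role") in ALLOWED_ROLES for m in messages):
            problems.append((lineno, "message with unknown or missing role"))
            continue
        if any(not str(m.get("content", "")).strip() for m in messages):
            problems.append((lineno, "empty message content"))
        # The model learns from the assistant turns, so each example should end with one.
        if messages[-1]["role"] != "assistant":
            problems.append((lineno, "last message is not an assistant reply"))
        # Exact duplicates add no new signal and over-weight that example.
        key = json.dumps(messages, sort_keys=True)
        if key in seen:
            problems.append((lineno, "duplicate of an earlier example"))
        seen.add(key)
    return problems


sample = "\n".join([
    '{"messages": [{"role": "user", "content": "Hi"}, {"role": "assistant", "content": "Hello!"}]}',
    '{"messages": [{"role": "user", "content": "Hi"}, {"role": "assistant", "content": "Hello!"}]}',
    '{"messages": [{"role": "user", "content": "Refund?"}]}',
    '{not json}',
])
for lineno, problem in validate_jsonl(sample):
    print(lineno, problem)
```

Reporting every problem with its line number, rather than stopping at the first one, makes it practical to clean a large dataset in a single pass.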

Once your dataset is uploaded, you configure training parameters. For most users, the defaults, which Forge tunes for common use cases, should work well. Advanced users can adjust learning rates, training epochs, and regularization settings. The platform estimates training time and cost before you commit, so you can review the expected cost before launching a run. Training runs on Mistral’s infrastructure and takes anywhere from a few hours to a couple of days, depending on dataset size.
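The article does not publish Forge’s pricing. On many token-billed fine-tuning services, however, training cost scales with the number of tokens processed: dataset tokens × epochs × price per token. Here is a back-of-the-envelope Python sketch of that arithmetic. The price is a hypothetical placeholder, and the 4-characters-per-token ratio is a rough rule of thumb for English text, not a property of Mistral’s tokenizer.

```python
import math


def estimate_training_cost(num_chars, epochs, price_per_million_tokens, chars_per_token=4.0):
    """Rough estimate of tokens processed and cost for a fine-tuning run.

    chars_per_token=4.0 is a common rule of thumb for English text (an
    assumption); price_per_million_tokens is a placeholder, not a Forge price.
    """
    dataset_tokens = math.ceil(num_chars / chars_per_token)
    trained_tokens = dataset_tokens * epochs  # every epoch re-reads the whole dataset
    cost = trained_tokens / 1_000_000 * price_per_million_tokens
    return trained_tokens, round(cost, 2)


# Example: ~20 million characters of text, 3 epochs, hypothetical $4 per million tokens.
tokens, cost = estimate_training_cost(20_000_000, epochs=3, price_per_million_tokens=4.0)
print(tokens, cost)  # 15000000 60.0
```

The epoch count multiplies the whole bill, so it is usually the first parameter to check when an estimate looks high.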

When training is complete, Forge automatically evaluates your custom model against a held-out validation set and compares its performance to the base model. You get a clear picture of how much improvement fine-tuning achieved and where the model still has gaps.
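Forge runs this comparison automatically. The sketch below only illustrates the underlying idea: hold out a deterministic slice of the examples, then score the base model and the fine-tuned model on that same slice. Both models are hypothetical stand-in Python functions. Exact-match accuracy is an assumed metric, since the article does not say which metrics Forge reports.

```python
import random


def split_holdout(examples, holdout_fraction=0.1, seed=42):
    """Shuffle deterministically and reserve a fraction of examples for validation."""
    shuffled = examples[:]
    random.Random(seed).shuffle(shuffled)
    n_holdout = max(1, int(len(shuffled) * holdout_fraction))
    return shuffled[n_holdout:], shuffled[:n_holdout]  # (train, validation)


def exact_match_accuracy(model, validation):
    """Fraction of validation prompts where the model output equals the reference answer."""
    hits = sum(1 for prompt, expected in validation
               if model(prompt).strip() == expected.strip())
    return hits / len(validation)


# Toy data: question "q7" has reference answer "a7".
examples = [(f"q{i}", f"a{i}") for i in range(50)]
train, validation = split_holdout(examples, holdout_fraction=0.2)


# Hypothetical stand-ins: the "base" model never gives the reference answer;
# the "tuned" model answers even-numbered questions correctly.
def base_model(prompt):
    return "I'm not sure."


def tuned_model(prompt):
    number = int(prompt[1:])
    return f"a{number}" if number % 2 == 0 else "?"


base = exact_match_accuracy(base_model, validation)
tuned = exact_match_accuracy(tuned_model, validation)
print(f"base={base:.2f} tuned={tuned:.2f} delta={tuned - base:+.2f}")
```

The validation examples never appear in the training split, and a fixed seed means repeated runs compare the models on the same prompts.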

Deployment Options

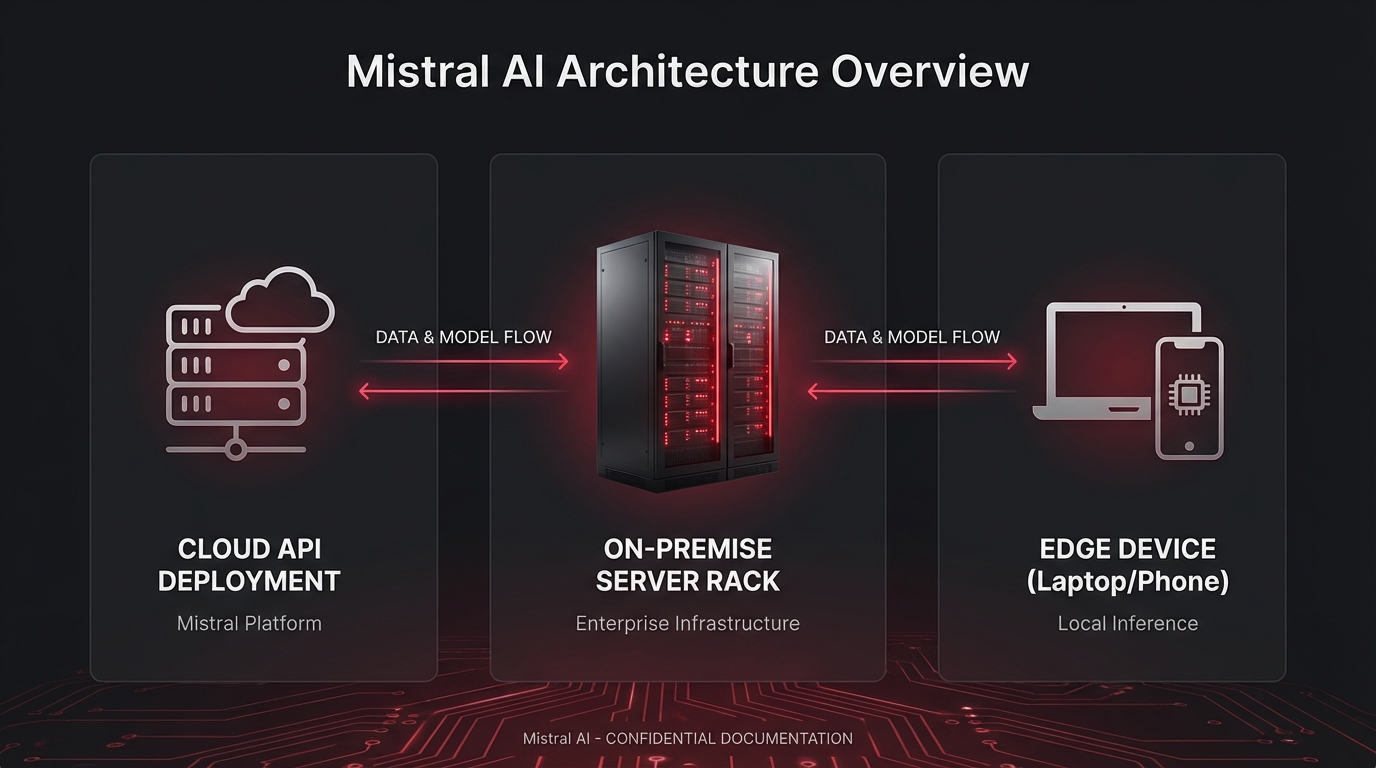

Mistral Forge offers flexible deployment. Your custom model can be hosted on Mistral’s La Plateforme, the company’s managed inference infrastructure, with API access that mirrors the standard Mistral API. This is the fastest path to production and requires no infrastructure management on your part.
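If the custom model is served through the standard Mistral API, calling it should look like any other chat completion, with only the model ID changing. Here is a minimal sketch using Python’s standard library. It builds a request to Mistral’s public `https://api.mistral.ai/v1/chat/completions` endpoint but does not send it. The model ID `ft:your-custom-model-id` is a hypothetical placeholder for whatever ID Forge assigns, and reading the key from a `MISTRAL_API_KEY` environment variable is an assumption.

```python
import json
import os
import urllib.request

API_URL = "https://api.mistral.ai/v1/chat/completions"


def build_chat_request(model_id, user_message, api_key):
    """Build (but do not send) a POST request in the chat-completions shape."""
    body = {
        "model": model_id,  # the custom model ID replaces a stock model name
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Accept": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


req = build_chat_request(
    "ft:your-custom-model-id",  # hypothetical placeholder ID
    "Summarize our refund policy in two sentences.",
    os.environ.get("MISTRAL_API_KEY", "sk-placeholder"),  # placeholder fallback key
)

# To actually send it (requires a valid key and network access):
# with urllib.request.urlopen(req) as resp:
#     reply = json.load(resp)
#     print(reply["choices"][0]["message"]["content"])
```

Because only the `model` field differs from a stock request, an existing integration could in principle switch to a custom model by changing one configuration value.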

For teams with data residency requirements or on-premise infrastructure needs, Forge also supports model export — you can download your custom model weights and deploy them on your own hardware or in your own cloud environment. This is critical for regulated industries where data and model sovereignty are non-negotiable.

How Forge Compares to Alternatives

OpenAI’s fine-tuning API is the most established option in the market, supporting GPT-4o and GPT-3.5-turbo. It’s well-documented and has strong tooling, but it locks you into OpenAI’s infrastructure with no model export option. Google’s Vertex AI fine-tuning supports Gemini models and integrates with the broader Google Cloud ecosystem — powerful, but complex to set up for teams without Google Cloud expertise.

Mistral Forge’s key differentiators are its model export capability, its European data residency options — important for GDPR-compliant organizations — and its competitive pricing. For European enterprises in particular, Forge may be the most compliance-friendly fine-tuning option available.

Final Thoughts

Mistral Forge lowers the barrier to custom AI significantly. If your organization has domain-specific data and a use case where general models underperform, Forge is worth serious evaluation. The combination of a guided training workflow, flexible deployment, and model export makes it one of the most practical fine-tuning platforms available in 2026. Follow PickGearLab for hands-on testing as we put Forge through its paces.
