When Google DeepMind released Gemma 4 on April 2, 2026, it didn’t just drop another open-weight model into an already crowded field — it redrew the competitive map entirely. With a fully permissive Apache 2.0 license, native multimodal capabilities across text, image, video, and audio, and a 31-billion-parameter flagship that currently ranks as the #3 open model in the world on the Arena AI leaderboard, Gemma 4 is the most ambitious open-source AI release of the year so far. For developers, researchers, and enterprises that have been waiting for an open model good enough to replace proprietary alternatives, that wait may finally be over.

What Makes Gemma 4 Different: Apache 2.0 and Multimodal-First Design

Past generations of the Gemma family shipped under Google’s custom “Gemma Terms of Use”, a license whose restrictions on commercial use, redistribution, and modification made many enterprise teams hesitant to build on them. Gemma 4 instead adopts the Apache 2.0 license, one of the most widely used permissive open-source licenses. You can now modify, fine-tune, redistribute, and commercialize Gemma 4 models without seeking special approval, provided you meet Apache 2.0’s standard conditions such as retaining the license text and attribution notices. That licensing change alone is arguably more significant than any technical improvement.

On the technical side, Gemma 4 is built multimodal from the ground up — not retrofitted. All four model variants natively process images, video (with and without audio), and text simultaneously. Variable-resolution image handling means the model doesn’t force every input into a fixed pixel grid; instead it intelligently adapts to the actual dimensions of the image. The vision encoder uses learned 2D positional embeddings, and the audio subsystem is based on a USM-style conformer architecture. This is not a text model with vision bolted on — it’s a genuinely multimodal architecture designed to handle real-world inputs from day one.

The four models span a wide capability-to-resource spectrum. The Effective 2B (E2B), with 2.3 billion active parameters, and the Effective 4B (E4B), with 4.5 billion active parameters, target on-device and edge deployment with 128K context windows. The 26B Mixture-of-Experts model activates only 4 billion of its 26 billion total parameters per forward pass, roughly 15% of the network, which keeps per-token compute close to that of a 4B dense model. The flagship 31B dense model tops out at a 256K context window and targets server-side deployments where raw performance matters most. With 140+ languages natively supported across the family, Gemma 4 is multilingual out of the box.
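As a rough illustration of how a Mixture-of-Experts layer touches only part of its parameters per token, here is a minimal top-k routing sketch. The expert count (8), the value of k (2), and the toy "experts" are assumptions made for illustration; the article does not state Gemma 4's actual routing configuration.

```python
import math

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_logits, k):
    """Pick the k highest-scoring experts and renormalize their weights."""
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return {i: probs[i] / norm for i in top}

def moe_forward(x, experts, router_logits, k):
    """Weighted sum of the outputs of only the selected experts."""
    weights = route_top_k(router_logits, k)
    out = [0.0] * len(x)
    for idx, w in weights.items():
        y = experts[idx](x)  # experts that were not selected are never evaluated
        out = [o + w * yi for o, yi in zip(out, y)]
    return out, sorted(weights)

# Hypothetical toy setup: 8 experts, 2 active per token (not Gemma 4's real numbers).
experts = [lambda x, s=s: [s * v for v in x] for s in range(1, 9)]
logits = [0.1, 2.0, -1.0, 0.3, 1.5, 0.0, -0.5, 0.2]
output, used = moe_forward([1.0, 2.0], experts, logits, k=2)
print("experts used:", used)
print("active fraction for 4B of 26B:", round(4 / 26, 3))
```

Only the selected experts run for a given token, which is why total parameter count and per-token compute can diverge so sharply.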

The Architecture Innovations That Power Gemma 4’s Efficiency

Google DeepMind didn’t just scale Gemma 4 up — they engineered it smarter. Two architectural innovations in particular stand out: Per-Layer Embeddings (PLE) and Shared KV Cache. Together, they explain how Gemma 4’s smaller models punch so far above their weight.

Per-Layer Embeddings (PLE) adds a parallel, lower-dimensional conditioning pathway alongside the main residual stream. Traditional transformers front-load all token-specific information into a single embedding at the input layer. PLE instead injects per-token context at each layer of the network independently, so every layer receives its own token-specific signal rather than relying on what survives from the input embedding. The benefit is most pronounced in smaller models like the E2B and E4B, where it enables a level of nuanced, context-aware processing that would otherwise require a far larger model.
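The idea can be sketched in a few lines of plain Python. This is a simplified illustration under stated assumptions, not Gemma 4's implementation: the toy sizes, the additive injection, and the `layer_body` stand-in are all hypothetical, and any gating or normalization a real model might apply is omitted.

```python
import random

random.seed(0)

VOCAB, D_MODEL, D_PLE, N_LAYERS = 10, 8, 2, 3  # toy sizes, chosen for illustration

def rand_matrix(rows, cols):
    return [[random.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

def matvec(matrix, vec):
    return [sum(w * v for w, v in zip(row, vec)) for row in matrix]

# Standard input embedding: one D_MODEL vector per token, used once at layer 0.
input_embedding = rand_matrix(VOCAB, D_MODEL)
# PLE (assumed form): every layer owns a small D_PLE-wide table plus an up-projection.
ple_tables = [rand_matrix(VOCAB, D_PLE) for _ in range(N_LAYERS)]
ple_up_proj = [rand_matrix(D_MODEL, D_PLE) for _ in range(N_LAYERS)]

def layer_body(hidden):
    """Stand-in for attention + MLP; a real layer would go here."""
    return [0.9 * h for h in hidden]

def forward(token_id):
    hidden = list(input_embedding[token_id])
    for layer in range(N_LAYERS):
        hidden = layer_body(hidden)
        # Inject this layer's own token-specific signal into the residual stream.
        ple_vec = ple_tables[layer][token_id]
        injected = matvec(ple_up_proj[layer], ple_vec)
        hidden = [h + i for h, i in zip(hidden, injected)]
    return hidden

out = forward(token_id=3)
print(len(out), "hidden values after", N_LAYERS, "layers")
```

Because each per-layer table is only `D_PLE` wide, the extra lookup adds little compute per token compared with widening the main residual stream.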

Shared KV Cache takes a different angle on efficiency. In standard attention mechanisms, every layer maintains its own key-value cache, so cache memory grows linearly with model depth as well as with sequence length. Gemma 4’s shared KV cache approach reuses key-value states from earlier layers in the final N layers of the network. Because those final layers neither store nor recompute their own keys and values, both memory footprint and inference compute drop, with minimal impact on model quality. For edge and on-device deployment, where memory is usually the binding constraint, that saving matters most.
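A back-of-the-envelope estimate shows how the saving scales. The layer count, head count, head dimension, and the choice of N = 10 sharing layers below are hypothetical values picked for illustration, not Gemma 4's published dimensions, and the estimate ignores the further savings from sliding-window layers.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len,
                   bytes_per_value=2, shared_last_n=0):
    """Estimate KV cache size when the last `shared_last_n` layers reuse earlier K/V."""
    if not 0 <= shared_last_n < n_layers:
        raise ValueError("shared_last_n must be in [0, n_layers)")
    layers_with_own_cache = n_layers - shared_last_n
    # Factor 2 covers keys plus values; bytes_per_value=2 assumes 16-bit storage.
    per_layer = 2 * n_kv_heads * head_dim * seq_len * bytes_per_value
    return layers_with_own_cache * per_layer

# Hypothetical config, not Gemma 4's published dimensions.
cfg = dict(n_layers=30, n_kv_heads=4, head_dim=256, seq_len=32_768)
baseline = kv_cache_bytes(**cfg)
shared = kv_cache_bytes(**cfg, shared_last_n=10)

print(f"baseline: {baseline / 2**30:.2f} GiB")   # baseline: 3.75 GiB
print(f"shared:   {shared / 2**30:.2f} GiB")     # shared:   2.50 GiB
print(f"saving:   {1 - shared / baseline:.0%}")  # saving:   33%
```

In this simplified model the saving is simply N divided by the layer count, so sharing across the last third of the network removes a third of the cache.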

The model also uses alternating local sliding-window attention (covering 512 to 1,024 tokens) and global full-context attention layers. This hybrid attention approach means Gemma 4 doesn’t waste compute on full global attention for every single layer — it reserves the expensive full-context passes for the layers where they matter most, while using efficient local attention elsewhere. The dual RoPE configuration (standard and proportional) rounds out an architecture that has clearly been tuned with real deployment constraints in mind, not just benchmark maximization.
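The difference between the two layer types comes down to the attention mask. The sketch below builds both masks for a tiny sequence and counts how many query-key pairs each one evaluates. The layer ratio (one global layer in every three), the window of 4, and the sequence length of 8 are toy assumptions; the article gives the window range but not the local-to-global ratio.

```python
def layer_pattern(n_layers, global_every):
    """Mark every `global_every`-th layer as global; the rest use a sliding window."""
    return ["global" if (i + 1) % global_every == 0 else "local"
            for i in range(n_layers)]

def causal_mask(seq_len, window=None):
    """mask[q][k] is True when query position q may attend to key position k."""
    mask = []
    for q in range(seq_len):
        row = []
        for k in range(seq_len):
            visible = k <= q  # causal: no looking ahead
            if window is not None:
                # local: only the most recent `window` tokens, including q itself
                visible = visible and (q - k) < window
            row.append(visible)
        mask.append(row)
    return mask

def attended_pairs(mask):
    return sum(sum(row) for row in mask)

# Toy values for illustration only.
pattern = layer_pattern(n_layers=6, global_every=3)
local = causal_mask(seq_len=8, window=4)
full = causal_mask(seq_len=8)

print(pattern)
print(attended_pairs(local), "local pairs vs", attended_pairs(full), "global pairs")
```

Local attention cost grows with sequence length times window size, while global attention grows with the square of sequence length, so the gap widens quickly at 128K or 256K tokens.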

Benchmark Performance: How Gemma 4 Stacks Up Against the Competition

Numbers matter, and Gemma 4’s benchmark performance is genuinely impressive — particularly at the 31B scale. On MMLU Pro, the 31B model scores 85.2%, placing it among the elite tier of models regardless of open or closed status. Its GPQA Diamond score of 84.3% reflects deep scientific reasoning ability, and its LiveCodeBench score of 80.0% puts it in the conversation for top-tier coding assistants. On multimodal benchmarks, MMMU Pro Vision comes in at 76.9% — strong performance that validates the native multimodal architecture.

Perhaps most telling is the Arena AI leaderboard score of approximately 1,452 for the 31B model, which places it at #3 among all open models globally. The 26B MoE model scores 1,441 despite activating only 4 billion parameters per token, so it lands within about 11 points of the flagship at a fraction of the per-token compute. For the E2B model, MMLU Pro sits at 60.0%, a remarkable result for a 2.3-billion-parameter model that can run on a smartphone.

To put this in competitive context: just eighteen months ago, a 31-billion-parameter open model of this quality simply didn’t exist at any price. Today it’s free, commercially licensed, and available on Hugging Face with multiple deployment pathways. The gap between open-weight and closed proprietary models — which was once a chasm — has narrowed to a crack.

Deployment Flexibility: From Smartphone to Enterprise Cloud

One of Gemma 4’s most compelling qualities is how many deployment pathways it supports out of the box. For developers, the Hugging Face Transformers pipeline integration is as simple as a few lines of Python. For on-device deployment on Apple Silicon, MLX support is built in and optimized. For quantized inference anywhere, llama.cpp compatibility means Gemma 4 can run on hardware as modest as a consumer laptop with a GGUF quantized weight file. There’s even a WebGPU demo that runs the E2B model entirely in the browser.

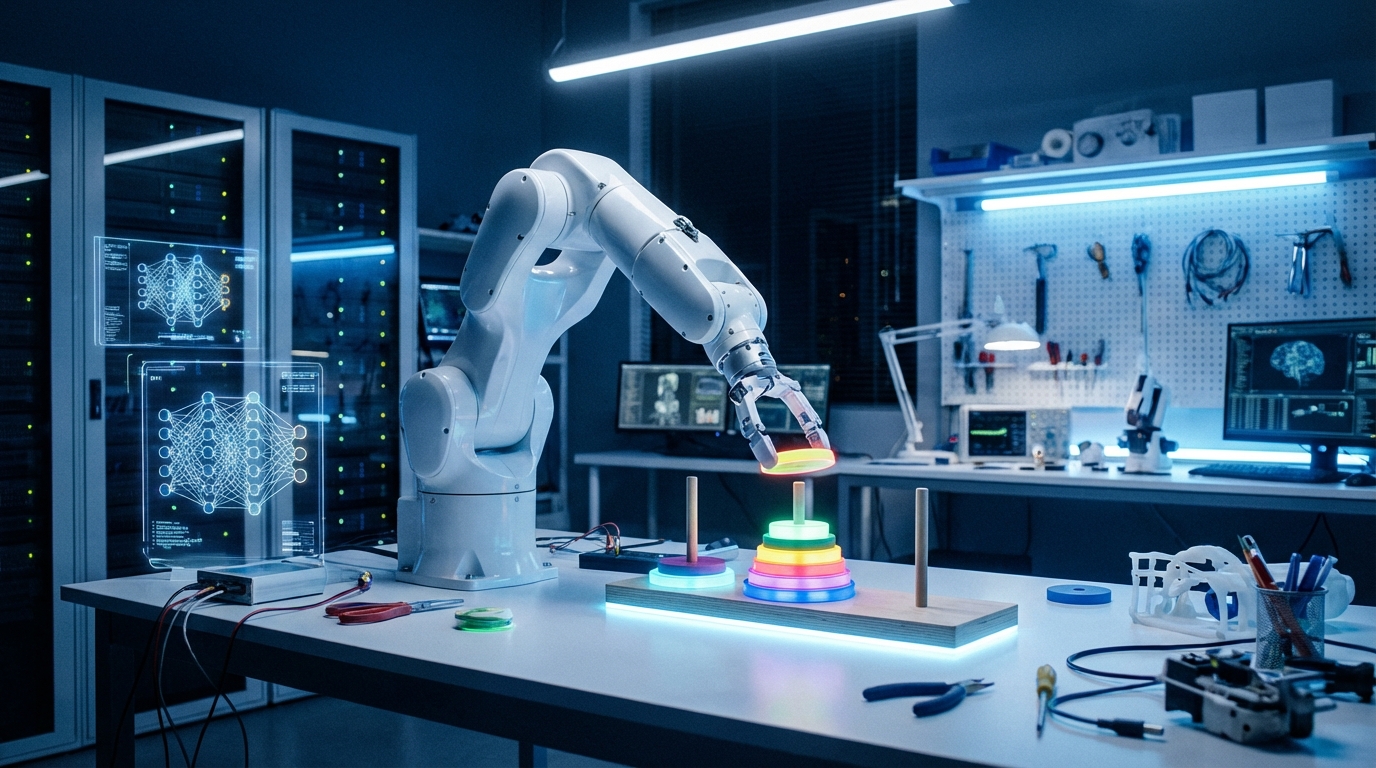

On the enterprise end, Google Cloud’s Vertex AI platform supports Gemma 4 fine-tuning with custom Docker containers and supervised fine-tuning (SFT) pipelines. The TRL (Transformer Reinforcement Learning) library has been updated with full multimodal support for Gemma 4, including multimodal tool responses in agentic training workflows. An example training script using the CARLA driving simulator demonstrates fine-tuning the E2B model on visual navigation tasks, a glimpse of the agentic robotics use cases now within reach of any team with a GPU cluster.

For developers who don’t want to manage infrastructure at all, Unsloth Studio provides a one-command installation and browser-based fine-tuning environment. The breadth of these options reflects a deliberate strategy: Google DeepMind wants Gemma 4 to be deployable by a solo developer on a MacBook and by a Fortune 500 enterprise on a Vertex AI cluster alike. Since the original Gemma 1 launch, developers have downloaded Gemma models more than 400 million times and built a community of over 100,000 variants. Gemma 4’s Apache 2.0 license should accelerate that trajectory.

Agentic Capabilities: Built for the Age of AI Workflows

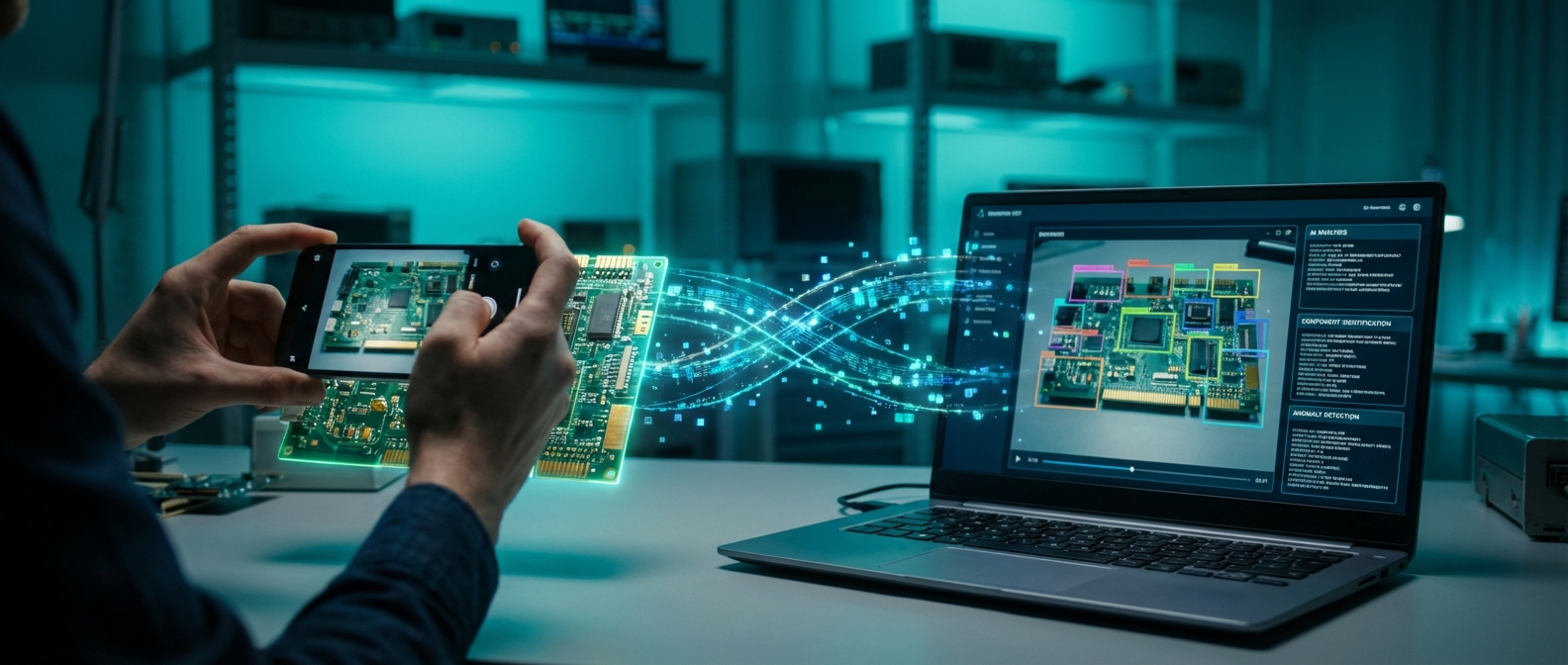

Beyond raw Q&A performance, Gemma 4 has been purpose-built for agentic applications — autonomous AI systems that take sequences of actions to complete complex tasks. The model includes native support for multimodal function calling, enabling tool use with visual context. This means an agent built on Gemma 4 can see a screenshot, identify a UI element, call a function to interact with it, observe the result visually, and continue iterating — all without leaving the model’s native capability set.
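The observe, act, and iterate cycle described above can be sketched as a small loop. Everything here is a stand-in for illustration: `fake_model` is a scripted function rather than a real Gemma 4 call, the "screenshot" is a dictionary rather than an image, and the tool names and action format are hypothetical.

```python
def run_agent(model, tools, take_screenshot, goal, max_steps=5):
    """Loop: show the model the screen, execute the tool call it returns, repeat."""
    history = []
    for _ in range(max_steps):
        observation = take_screenshot()
        action = model(goal=goal, screenshot=observation, history=history)
        if action["tool"] == "done":
            return history
        result = tools[action["tool"]](**action["args"])
        history.append({"action": action, "result": result})
    return history  # step budget exhausted without a "done" signal

# Stand-ins for illustration only: a scripted "model" and an in-memory "screen".
screen = {"field": "", "submitted": False}

def fake_model(goal, screenshot, history):
    if screenshot["field"] == "":
        return {"tool": "type_text", "args": {"text": "hello"}}
    if not screenshot["submitted"]:
        return {"tool": "click", "args": {"target": "submit"}}
    return {"tool": "done", "args": {}}

def type_text(text):
    screen["field"] = text
    return "typed"

def click(target):
    screen["submitted"] = True
    return f"clicked {target}"

steps = run_agent(
    fake_model,
    {"type_text": type_text, "click": click},
    take_screenshot=lambda: dict(screen),
    goal="submit the form",
)
print([s["action"]["tool"] for s in steps])
```

The `max_steps` cap is a deliberate design choice: an agent that never emits a stop signal should run out of budget rather than loop indefinitely.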

GUI detection and web element localization are first-class capabilities, with the model outputting native JSON bounding boxes for detected elements. Object detection and pointing work similarly. These are exactly the capabilities needed to build robust computer-use agents — the class of AI system that can operate desktop software, fill out forms, navigate websites, and complete multi-step workflows on behalf of users. With MCP (Model Context Protocol) now having crossed 97 million installs as the standard for connecting AI agents to external tools, a Gemma 4 agent can theoretically plug into thousands of pre-built integrations from day one.
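Turning such JSON output into something a click handler can use typically requires a small parsing step. The sketch below assumes a hypothetical schema in which each element carries a `label` and a `box_2d` of `[y_min, x_min, y_max, x_max]` normalized to a 0 to 1000 range; the article does not specify Gemma 4's actual output format, so check the model documentation before relying on these field names.

```python
import json
from dataclasses import dataclass

@dataclass
class PixelBox:
    label: str
    left: int
    top: int
    right: int
    bottom: int

    def center(self):
        return ((self.left + self.right) // 2, (self.top + self.bottom) // 2)

def parse_boxes(model_output, image_width, image_height, scale=1000):
    """Convert normalized [y_min, x_min, y_max, x_max] boxes to pixel coordinates."""
    boxes = []
    for item in json.loads(model_output):
        y0, x0, y1, x1 = item["box_2d"]  # assumed field name and coordinate order
        if not (0 <= x0 <= x1 <= scale and 0 <= y0 <= y1 <= scale):
            continue  # skip malformed boxes rather than acting on bad coordinates
        boxes.append(PixelBox(
            label=item["label"],
            left=round(x0 / scale * image_width),
            top=round(y0 / scale * image_height),
            right=round(x1 / scale * image_width),
            bottom=round(y1 / scale * image_height),
        ))
    return boxes

# Hand-written sample in the assumed schema, not real model output.
sample = '[{"label": "Submit button", "box_2d": [500, 250, 600, 750]}]'
boxes = parse_boxes(sample, image_width=1920, image_height=1080)
print(boxes[0], boxes[0].center())
```

An agent would typically click at `center()` of the matched element, then take a fresh screenshot to confirm the result.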

Video understanding, both with and without audio, opens up another category of agentic applications: monitoring systems, video summarization, visual QA for surveillance or manufacturing, and real-time video analysis for autonomous vehicles. The CARLA driving simulator fine-tuning example in the TRL documentation isn’t an accident — Google is explicitly positioning Gemma 4 as the foundation model for robotics and embodied AI research.

What This Means for the Open-Source AI Ecosystem

Gemma 4’s release isn’t just a product announcement; it’s a statement about the future of AI development. When a model with GPQA Diamond performance of 84.3% and an Arena AI score in the top three among open models ships under Apache 2.0, it fundamentally changes the calculus for every organization deciding whether to build on proprietary APIs or open infrastructure.

For startups, the licensing change alone is transformative. You can now build a commercially viable product on Gemma 4 without negotiating enterprise API contracts or worrying about rate limits and pricing changes from a third-party provider. For researchers, access to the full weights, together with transparency about training methodology, enables experiments that closed models simply can’t support. For enterprises in regulated industries such as healthcare, finance, and legal, on-premises deployment of a frontier-class open model satisfies data governance requirements that cloud API calls cannot.

The Gemma ecosystem’s 100,000+ community variants also mean that fine-tuned versions for specific domains — medical coding, legal document review, materials science, financial modeling — are likely to proliferate rapidly. Apache 2.0 means those fine-tuned variants can be commercialized without restriction, creating an entire secondary market of specialized Gemma 4 derivatives.

Conclusion: The Open Model Era Has Arrived

Google’s Gemma 4 represents the moment open-source AI stopped being a compromise and started being a genuine first choice. With four model sizes spanning smartphone to server, native multimodal capabilities across text, image, video, and audio, a fully permissive Apache 2.0 license, and benchmark performance that rivals models costing tens of millions of dollars to train and deploy, Gemma 4 is the open-weight model the industry has been building toward for years.

Whether you’re a solo developer building a multimodal chatbot, a research team exploring agentic AI workflows, or an enterprise looking to bring AI on-premises without vendor lock-in, Gemma 4 deserves a serious look. The 31B model is available now on Hugging Face and Google Cloud. The E2B runs in your browser. The future of open AI is here, and it’s licensed for everyone to use.
