In March 2026, a quiet infrastructure milestone sent ripples through the AI industry that most mainstream headlines failed to capture. Anthropic’s Model Context Protocol — known simply as MCP — crossed 97 million installs, making it the fastest-adopted AI infrastructure standard in history. For context, Kubernetes, which now underpins much of the world’s cloud infrastructure, took nearly four years to reach comparable deployment density. MCP did it in sixteen months. What began as an internal Anthropic experiment has become the connective tissue of the agentic AI era, and this week Anthropic formalized that status by donating the protocol to the Linux Foundation’s newly established Agentic AI Foundation. The plumbing of AI agents just became a public good.

What Is MCP and Why Does It Matter?

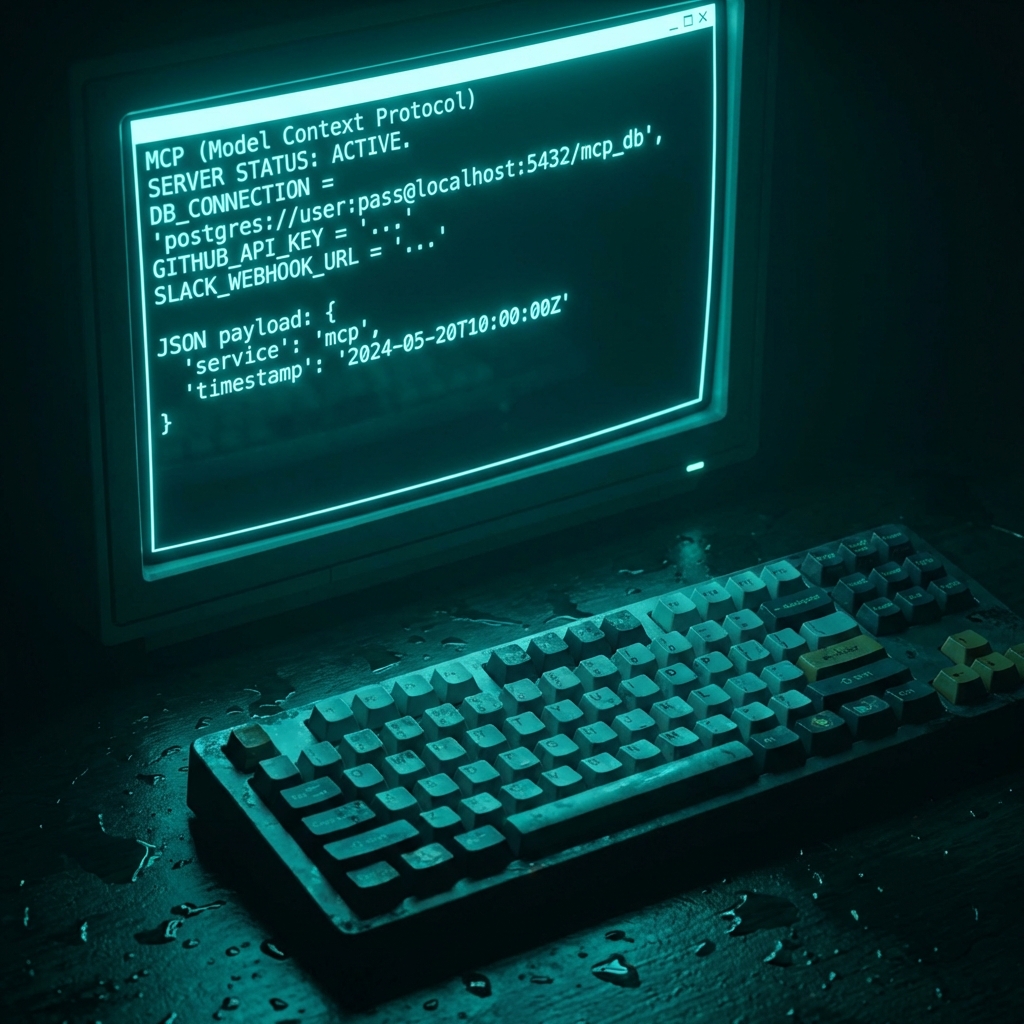

The Model Context Protocol is a standardized open protocol that enables AI agents to communicate with external tools, data sources, and services in a consistent, predictable way. Before MCP, every AI application that needed to connect to a database, call a third-party API, or read a file had to build its own custom integration layer. Enterprises maintaining dozens of AI workflows found themselves managing hundreds of bespoke connectors — brittle, expensive, and difficult to audit.

MCP solves this by establishing a universal specification, essentially the HTTP of agentic AI. Just as HTTP standardized how web browsers communicate with servers, MCP standardizes how AI agents communicate with external capability providers called “MCP servers.” Any tool, database, or service that publishes an MCP server can be connected to any MCP-compatible AI system without a custom integration. The architecture is clean: Hosts (AI applications) connect through Clients (protocol handlers) to Servers, which expose the capabilities of systems like GitHub, Postgres, Slack, and hundreds more. The result is a plug-and-play ecosystem for AI agent tooling at enterprise scale.
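To make the Host, Client, and Server roles concrete, here is a minimal in-process sketch in Python. It is a toy simulation, not the official MCP SDK: the class names (`ToyServer`, `ToyClient`, `ToyHost`) are hypothetical, the transport is a plain function call, and the messages are only loosely modeled on the JSON-RPC 2.0 style and the `tools/list` and `tools/call` methods that MCP uses. Real servers also negotiate capabilities and return richer results.

```python
import json
from typing import Any, Callable, Dict, List


class ToyServer:
    """Stand-in for an MCP server: exposes named tools via JSON-RPC-style messages."""

    def __init__(self, name: str) -> None:
        self.name = name
        self._tools: Dict[str, Callable[..., Any]] = {}

    def register_tool(self, tool_name: str, fn: Callable[..., Any]) -> None:
        self._tools[tool_name] = fn

    def handle(self, raw_request: str) -> str:
        request = json.loads(raw_request)
        reply: Dict[str, Any] = {"jsonrpc": "2.0", "id": request["id"]}
        method = request["method"]
        params = request.get("params", {})
        if method == "tools/list":
            reply["result"] = {"tools": [{"name": n} for n in sorted(self._tools)]}
        elif method == "tools/call":
            tool = self._tools.get(params.get("name"))
            if tool is None:
                reply["error"] = {"code": -32602, "message": "Unknown tool"}
            else:
                output = tool(**params.get("arguments", {}))
                # Simplified result shape: a single text item.
                reply["result"] = {"content": [{"type": "text", "text": str(output)}]}
        else:
            reply["error"] = {"code": -32601, "message": "Method not found"}
        return json.dumps(reply)


class ToyClient:
    """Protocol handler: turns method calls into messages for exactly one server."""

    def __init__(self, server: ToyServer) -> None:
        self._server = server
        self._next_id = 0

    def _request(self, method: str, params: Dict[str, Any]) -> Dict[str, Any]:
        self._next_id += 1
        message = {"jsonrpc": "2.0", "id": self._next_id,
                   "method": method, "params": params}
        response = json.loads(self._server.handle(json.dumps(message)))
        if "error" in response:
            raise RuntimeError(response["error"]["message"])
        return response["result"]

    def list_tools(self) -> List[str]:
        return [tool["name"] for tool in self._request("tools/list", {})["tools"]]

    def call_tool(self, name: str, **arguments: Any) -> str:
        result = self._request("tools/call", {"name": name, "arguments": arguments})
        return result["content"][0]["text"]


class ToyHost:
    """The AI application: holds one client per connected server."""

    def __init__(self) -> None:
        self._clients: Dict[str, ToyClient] = {}

    def connect(self, server: ToyServer) -> None:
        self._clients[server.name] = ToyClient(server)

    def tools(self) -> Dict[str, List[str]]:
        return {name: client.list_tools() for name, client in self._clients.items()}

    def call(self, server_name: str, tool: str, **arguments: Any) -> str:
        return self._clients[server_name].call_tool(tool, **arguments)
```

In this sketch, a host would call `connect` once per server, discover tools with `tools()`, and invoke them with `call(...)`. The server never needs to know which host or model is on the other side, which is the property the plug-and-play claim rests on.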

From Experiment to Foundation: MCP’s Remarkable 16-Month Rise

When Anthropic first introduced MCP in late 2024, industry reception was cautiously optimistic. Another protocol, another standard — the tech industry has seen plenty of promising specifications fade quietly into irrelevance. MCP was different. Within weeks of release, developers began building MCP servers for their favorite tools. Within months, the community had published thousands of them. By mid-March 2026, the ecosystem counted over 10,000 active public MCP servers covering databases, cloud providers, CRM systems, developer tools, analytics platforms, and more.

The acceleration was driven by a critical decision: Anthropic kept MCP genuinely open. No licensing fees, no vendor lock-in, no proprietary extensions that favored Claude over competitors. By the time OpenAI, Google DeepMind, Cohere, and Mistral integrated MCP support into their own agent frameworks — making it a default rather than an option — the protocol had achieved something rare in tech: true cross-vendor standardization without a standards body forcing it. It happened organically, because developers voted with their implementations.

Enterprise adoption followed the developer groundswell. Block eliminated 340 custom integration connectors by migrating to MCP. Apollo reduced integration maintenance overhead by 60 percent. Replit rebuilt its entire AI development environment on MCP-compatible infrastructure. Fortune 500 companies that had been cautiously experimenting with AI agents in Q4 2025 moved to production deployments in Q1 2026 — in large part because MCP gave their infrastructure teams a standardized, auditable, secure connection layer they could actually trust at scale.

Why Every Major AI Lab Now Ships MCP by Default

The decision by OpenAI, Google DeepMind, Cohere, and Mistral to ship MCP as a default in their agent frameworks was not altruistic; it was a strategic inevitability. The network effects of MCP’s server ecosystem had grown too large to ignore. A framework that shipped without MCP compatibility was a framework that couldn’t connect to the 10,000-plus tools developers had already built MCP servers for. Refusing MCP meant ceding the developer ecosystem to competitors who embraced it.

This dynamic fundamentally changed the competitive landscape of AI infrastructure. Historically, platform vendors such as Microsoft, Salesforce, and AWS competed partly by making their connector ecosystems proprietary, locking enterprise customers into their stacks through integration gravity. MCP disrupts that model. When any agent can connect to any tool through a single open protocol, switching costs collapse and the advantage goes to the best AI, not the most entrenched platform. That’s why MCP’s 97 million installs aren’t just a usage metric. They represent a structural shift in how enterprise AI power is distributed.

Claude itself now ships with over 75 built-in connectors powered by MCP. Microsoft Copilot, Cursor, Gemini, and Visual Studio Code all support the protocol natively. Enterprise infrastructure from AWS, Cloudflare, Google Cloud, and Microsoft Azure has been updated to support MCP-native deployment patterns, making it straightforward to run MCP server clusters in production at global scale.

The Linux Foundation Move: Why Open Governance Changes Everything

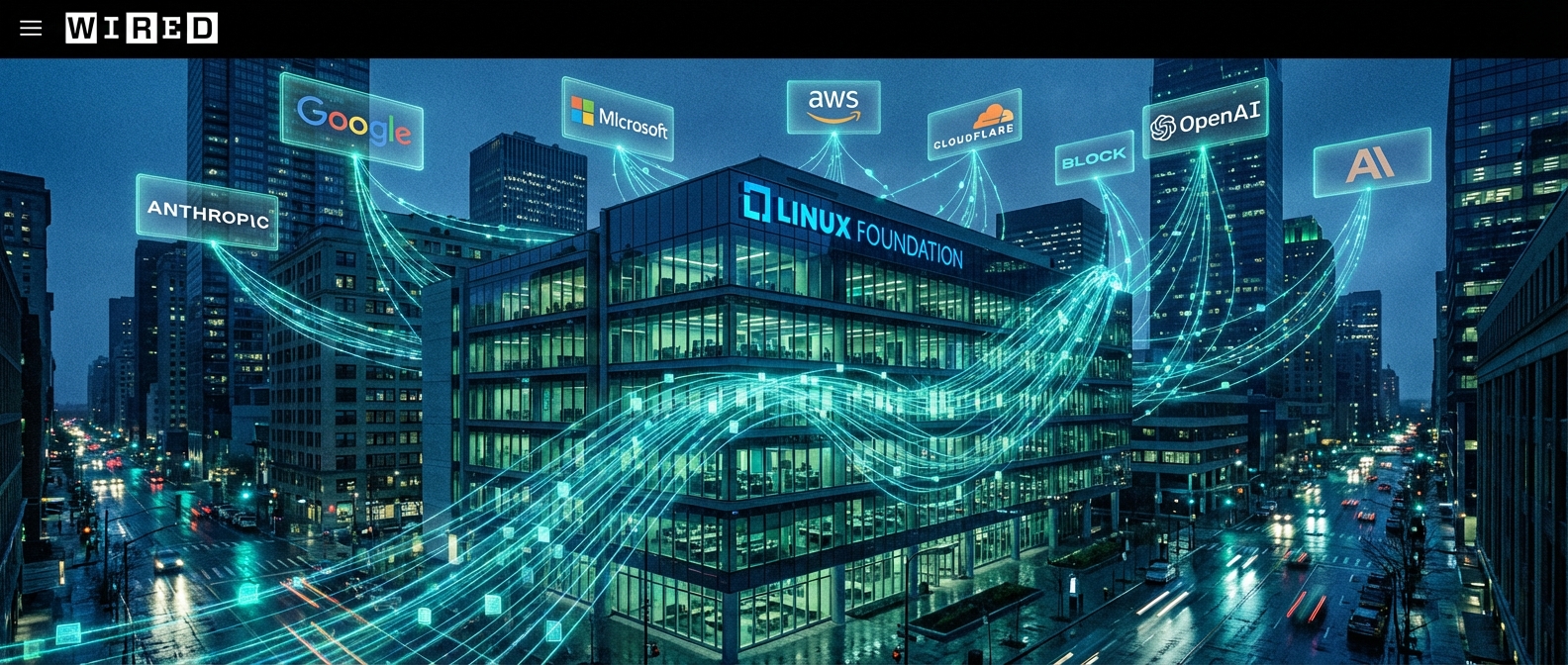

The governance announcement this week may prove to be as significant as the usage milestone itself. Anthropic has donated MCP to the Agentic AI Foundation (AAIF), a directed fund under the Linux Foundation, co-founded by Anthropic, Block, and OpenAI. Supporting organizations include Google, Microsoft, AWS, Cloudflare, and Bloomberg — a coalition that spans AI labs, cloud providers, developer tooling, and financial services.

The Linux Foundation’s track record here matters enormously. It currently stewards the Linux kernel, Kubernetes, Node.js, and hundreds of other foundational open-source projects. Its governance model — transparent roadmapping, vendor-neutral technical steering committees, well-defined contribution processes — is what keeps critical shared infrastructure from becoming captured by any single corporate interest. By moving MCP into this structure, Anthropic is making a credible, verifiable commitment: the protocol will remain open, community-driven, and vendor-neutral regardless of what happens to Anthropic as a company.

This move also signals something about Anthropic’s strategic calculus. Rather than treating MCP as a competitive moat (a way to pull enterprises deeper into Claude), Anthropic is betting that a genuinely open standard grows the overall agentic AI market faster than a proprietary one. It’s the same bet Netscape made when it open-sourced its browser code, or IBM made when it pledged hundreds of its patents to the open-source community. Sometimes the biggest long-term competitive advantage is building an ecosystem so healthy that your competitors can’t afford to leave it.

Real-World Impact: Enterprises Rebuilding on MCP

The ground-level impact of MCP’s adoption is most visible in how enterprise AI workflows have been redesigned over the past year. Before MCP, a typical Fortune 500 AI deployment might involve a custom integration layer that took months to build, was maintained by a dedicated team, and broke every time an upstream API updated. Those deployments were isolated islands — powerful within their scope but unable to share tooling or patterns with other AI workflows in the same organization.

With MCP, that picture has changed. An MCP server built for a company’s internal data warehouse can be shared across every AI agent in the organization — whether it’s a Claude-powered customer support agent, a Gemini-based code review assistant, or a GPT-5.4 workflow automation tool. The investment in building one MCP server multiplies across every AI application in the stack. Teams that previously needed separate integration specialists for each AI project now share infrastructure. The organizational economics of AI deployment have fundamentally shifted.
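The economics described above can be sketched with simple arithmetic. The sketch assumes, for illustration only, that every AI application needs every tool, and that a bespoke approach requires one connector per application-tool pair while a shared protocol requires one client per application plus one server per tool. The function names and the example numbers are hypothetical, not figures from any company mentioned here.

```python
def bespoke_connectors(num_apps: int, num_tools: int) -> int:
    """One custom connector for every (application, tool) pair."""
    return num_apps * num_tools


def protocol_components(num_apps: int, num_tools: int) -> int:
    """One protocol client per application plus one server per tool."""
    return num_apps + num_tools


if __name__ == "__main__":
    apps, tools = 12, 20  # illustrative numbers only
    print("bespoke connectors:", bespoke_connectors(apps, tools))
    print("protocol components:", protocol_components(apps, tools))
```

Under these assumptions, adding one more tool costs one new server rather than one new connector per application, which is why the investment in a single MCP server compounds as the number of agents grows.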

Security and compliance teams have also found MCP’s standardization valuable in unexpected ways. Because MCP defines explicit, auditable connection patterns, it’s far easier to write security policies around MCP-based connections than around the ad-hoc integrations that preceded them. Enterprise security tools are beginning to ship MCP-native monitoring and policy enforcement — a development that would have been impossible without a common standard to target.
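As a rough illustration of why a common message format makes policy easier to write, the sketch below checks tool-call requests against a per-agent allowlist and records every decision. Everything here is hypothetical: `ToolPolicy`, its fields, and the agent and tool names are invented for the example, and the request shape is a simplified JSON-RPC-style `tools/call` message rather than the output of any real monitoring product.

```python
import json
from dataclasses import dataclass, field
from typing import Any, Dict, List, Set


@dataclass
class ToolPolicy:
    # Hypothetical policy shape: agent name -> set of tool names it may call.
    allowed: Dict[str, Set[str]]
    audit_log: List[Dict[str, Any]] = field(default_factory=list)

    def authorize(self, agent: str, raw_request: str) -> bool:
        """Return True if the request may proceed; log the decision either way."""
        request = json.loads(raw_request)
        method = request.get("method")
        tool = None
        if method == "tools/call":
            tool = request.get("params", {}).get("name")
            decision = tool in self.allowed.get(agent, set())
        else:
            # This sketch only polices tool invocations; other methods pass through.
            decision = True
        self.audit_log.append(
            {"agent": agent, "method": method, "tool": tool, "allowed": decision}
        )
        return decision


def make_call(tool: str) -> str:
    """Build a simplified tools/call request for the given tool name."""
    return json.dumps({"jsonrpc": "2.0", "id": 1, "method": "tools/call",
                       "params": {"name": tool, "arguments": {}}})
```

Because every request names its method and tool in the same place, one small check covers every connected server. With ad-hoc integrations, an equivalent rule would have to be rewritten for each connector's own request format.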

What the 97 Million Number Actually Means

Numbers in tech can mislead. Installs don’t always equal active users; downloads don’t always equal deployments. But MCP’s 97 million figure counts SDK downloads across Python and TypeScript: language-level packages that developers install when they’re actively building. Unlike app store downloads that can sit unused on a device, an SDK download usually reflects an engineering decision to build something on top of the protocol. If anything, the figure can understate enterprise usage: at companies like Block and Apollo, a single counted SDK install can sit behind thousands of active production instances.

To put it in context: npm (Node Package Manager) itself took years to reach the kind of weekly download numbers that MCP is now hitting. The Kubernetes Python client, which is arguably the best comparison for infrastructure SDK adoption, required several years of enterprise cloud adoption to reach comparable scale. MCP reached these figures in a market that didn’t fully exist sixteen months ago — the production agentic AI market. That’s what makes the trajectory remarkable: it’s not riding an existing wave, it’s defining the wave.

Conclusion: The Plumbing Is Now Assumed

There is a moment in the maturation of every transformative technology when it stops being a feature and becomes infrastructure — when it transitions from “interesting capability” to “assumed plumbing.” TCP/IP reached that moment in the late 1990s. HTTP reached it in the early 2000s. Kubernetes reached it sometime around 2020. MCP, based on the evidence of 97 million installs, cross-vendor adoption, and now Linux Foundation governance, appears to be reaching that moment right now, in April 2026.

For developers, that means MCP support is moving from “nice to have” to “table stakes” in any AI agent framework. For enterprises, it means the integration complexity that has slowed AI deployment is finally dissolving into standardized, auditable, vendor-neutral infrastructure. For the broader AI industry, it means the competitive battles of the next decade won’t be fought primarily on who controls the connector ecosystem — they’ll be fought on the quality of the AI itself. Anthropic’s bet in donating MCP is that when the playing field is level, the best model wins. Watch this space closely.
