OpenAI’s deployment of the full GPT-5.4 model series this month came with a benchmark result that stopped the AI research community in its tracks. The “Thinking” variant of GPT-5.4 scored 75.0 percent on OSWorld-Verified, a rigorous benchmark that tests an AI system’s ability to complete real computer-use tasks: navigating file systems, editing spreadsheets, writing and executing code, managing calendars, and operating software the way a human employee would. That score exceeds the average human score on the same test.

Before the inevitable “but can it replace my job” anxiety kicks in, some important context is in order. Passing a benchmark is not the same as replacing human workers. OSWorld-Verified tests specific, well-defined desktop tasks in controlled conditions. Real workplaces are messier, involve ambiguous instructions, require social intelligence, and demand accountability in ways that current AI systems handle poorly. The benchmark is a meaningful signal, not an arrival notice for the robot apocalypse.

What Changed Between GPT-5 and GPT-5.4?

The most significant architectural change in GPT-5.4 is the deeper integration of test-time compute: the model’s ability to “think longer” on harder problems before producing an answer. Earlier versions of this capability existed in OpenAI’s “o” series of reasoning models, but GPT-5.4 folds it more seamlessly into a general-purpose assistant, allowing the model to scale its effort to task complexity without requiring users to switch modes explicitly.

The result is a model that handles straightforward queries quickly but can allocate significantly more computation to problems that warrant it — debugging complex codebases, drafting nuanced legal documents, working through multi-step scientific analysis. In practice, users report that GPT-5.4 feels qualitatively different on “hard” tasks: more thorough, less prone to confident-sounding errors, and better at identifying when it doesn’t know something.

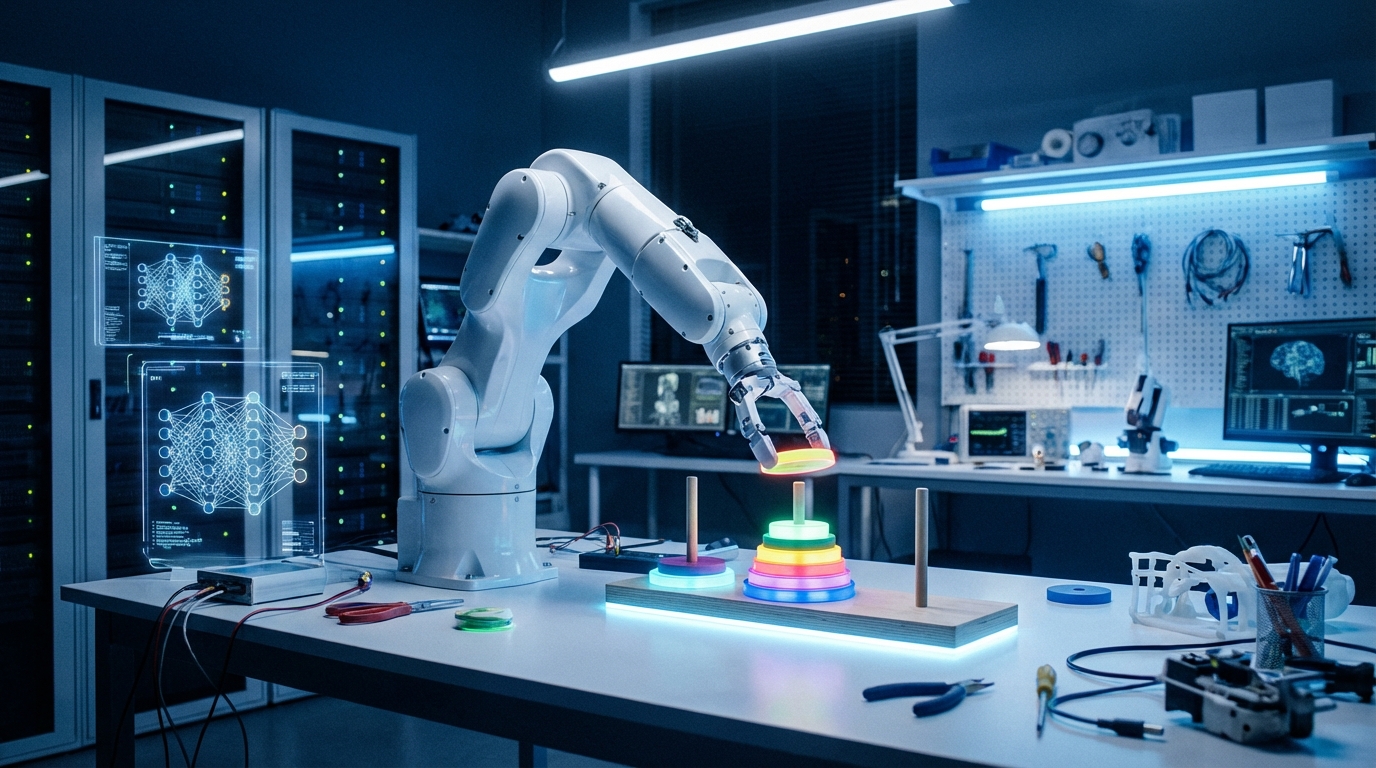

Multimodal capabilities also took a substantial leap. GPT-5.4 processes images, documents, audio clips, and video frames with much tighter integration than previous versions. A user can share a photo of a handwritten whiteboard diagram, a PDF contract, and a voice note, and GPT-5.4 can synthesize all three inputs into a coherent response. This kind of genuinely multimodal reasoning is what makes the computer-use benchmark results meaningful, because the model isn’t just reading text but interpreting visual interfaces.

The Competitive Landscape

GPT-5.4’s launch didn’t happen in a vacuum. Anthropic’s Claude Mythos 5, released just weeks earlier, had itself set new records on coding and reasoning benchmarks. Google DeepMind’s Gemini Ultra 3 is reportedly on a tight release schedule. The pace of frontier model improvement is so rapid that benchmark records now have a shelf life measured in weeks rather than months.

For enterprise customers trying to make rational procurement decisions, this pace is simultaneously exciting and exhausting. The model you evaluate today may be significantly outperformed by the time your procurement process concludes. An increasing number of companies are responding by building model-agnostic infrastructure — abstraction layers that allow them to swap underlying AI providers without rewriting their applications.
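To make the idea of an abstraction layer concrete, here is a minimal Python sketch. Everything in it is hypothetical: `ChatProvider`, `ModelGateway`, and the two echo providers are illustrative names invented for this example, and the echo classes stand in for real vendor SDK calls, which are deliberately not shown. The design point is that application code talks only to the gateway, so moving to a different provider becomes a configuration change rather than a rewrite.

```python
from dataclasses import dataclass
from typing import Protocol


class ChatProvider(Protocol):
    """Hypothetical interface that every provider adapter implements."""

    name: str

    def complete(self, prompt: str) -> str:
        ...


@dataclass
class EchoProviderA:
    """Stand-in for one vendor; a real adapter would call that vendor's SDK here."""

    name: str = "provider-a"

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


@dataclass
class EchoProviderB:
    """Stand-in for a second vendor with different (toy) behavior."""

    name: str = "provider-b"

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt.upper()}"


class ModelGateway:
    """The only entry point application code uses; vendors sit behind it."""

    def __init__(self, providers: dict[str, ChatProvider], default: str) -> None:
        if default not in providers:
            raise KeyError(f"unknown default provider: {default}")
        self._providers = providers
        self._active = default

    def switch(self, name: str) -> None:
        """Swap the underlying provider without touching calling code."""
        if name not in self._providers:
            raise KeyError(f"unknown provider: {name}")
        self._active = name

    def complete(self, prompt: str) -> str:
        return self._providers[self._active].complete(prompt)


if __name__ == "__main__":
    gateway = ModelGateway({"a": EchoProviderA(), "b": EchoProviderB()}, default="a")
    print(gateway.complete("summarize this contract"))
    gateway.switch("b")  # e.g. after a new procurement decision
    print(gateway.complete("summarize this contract"))
```

In a real deployment the adapters would also need to normalize differences such as token limits, error types, and response formats, which is where most of the engineering effort in these layers tends to go.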

What Should Everyday Users Take Away?

The practical upshot for non-specialist users is straightforward: the AI tools available in 2026 are dramatically more capable than what existed even 18 months ago. Tasks that previously required significant human expertise — drafting a solid contract, debugging a Python script, analyzing a financial statement, researching a medical question — can now be meaningfully assisted by AI in ways that genuinely save time and improve output quality.

The key word remains “assisted.” The best results still come from humans who understand the domain well enough to evaluate AI outputs critically. A 75 percent score on a desktop benchmark means GPT-5.4 completed roughly three out of four test tasks successfully, not that it will get every task right; the remaining quarter matters, especially in high-stakes contexts. Use these tools as powerful collaborators, not autonomous replacements, and they’ll serve you extraordinarily well.
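To see why the failure margin matters, consider a rough back-of-the-envelope illustration. Suppose, purely as a simplifying assumption, that each step of a longer workflow succeeds independently with the same 75 percent probability. Real tasks are neither independent nor uniformly difficult, and a benchmark score is not a per-step reliability figure, so treat this as a toy model rather than a prediction; the helper name `chain_success_rate` is hypothetical.

```python
def chain_success_rate(per_step: float, steps: int) -> float:
    """Probability that every step in a chain succeeds, ASSUMING each step
    succeeds independently with the same probability (a toy model)."""
    if not 0.0 <= per_step <= 1.0:
        raise ValueError("per_step must be a probability between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step ** steps


# Print how the end-to-end success rate shrinks as the chain gets longer.
for steps in (1, 2, 5, 10):
    print(f"{steps:>2} steps: {chain_success_rate(0.75, steps):.1%}")
```

Under this toy model, a five-step chain finishes cleanly only about 24 percent of the time, and a ten-step chain about 6 percent, which is why a human checking the output at each stage still pays off.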
