When Google quietly released Gemma 4 on April 2, 2026, it sent shockwaves through the AI developer community. The new family of open-source models doesn’t just incrementally improve on its predecessors — it fundamentally redefines what “efficient AI” means. With its flagship 31B parameter model ranking as the third most capable open model in the world, and its lightweight variants running on smartphones and Raspberry Pi devices, Google Gemma 4 is setting a new standard for what open-source AI can achieve.

For developers, researchers, and enterprises alike, the arrival of Gemma 4 represents a pivotal moment. The question is no longer whether open-source AI can compete with proprietary giants — it’s how quickly the ecosystem will build on top of what Google has just handed the world for free.

What Is Google Gemma 4 and Why Does It Matter?

Gemma 4 is Google’s fourth generation of open-weight AI models, built using the same underlying research and technology that powers Google’s flagship proprietary Gemini 3 model. Unlike closed commercial models, Gemma 4 releases the model weights publicly, allowing developers to inspect, modify, fine-tune, and deploy these models without restriction — all under an Apache 2.0 license that permits commercial use without royalty or licensing fees.

This matters enormously in today’s AI landscape. As big tech companies increasingly lock their most powerful models behind paywalls and API rate limits, Gemma 4 offers a credible, high-performance alternative that organizations can run entirely on their own infrastructure. From data privacy to cost savings to customization, the implications for the developer community are profound.

Google reports that Gemma models have been downloaded more than 400 million times across all generations, with the community creating over 100,000 derivative variants. Gemma 4 is poised to accelerate that adoption dramatically, particularly among enterprises with data sovereignty requirements and developers building on the edge.

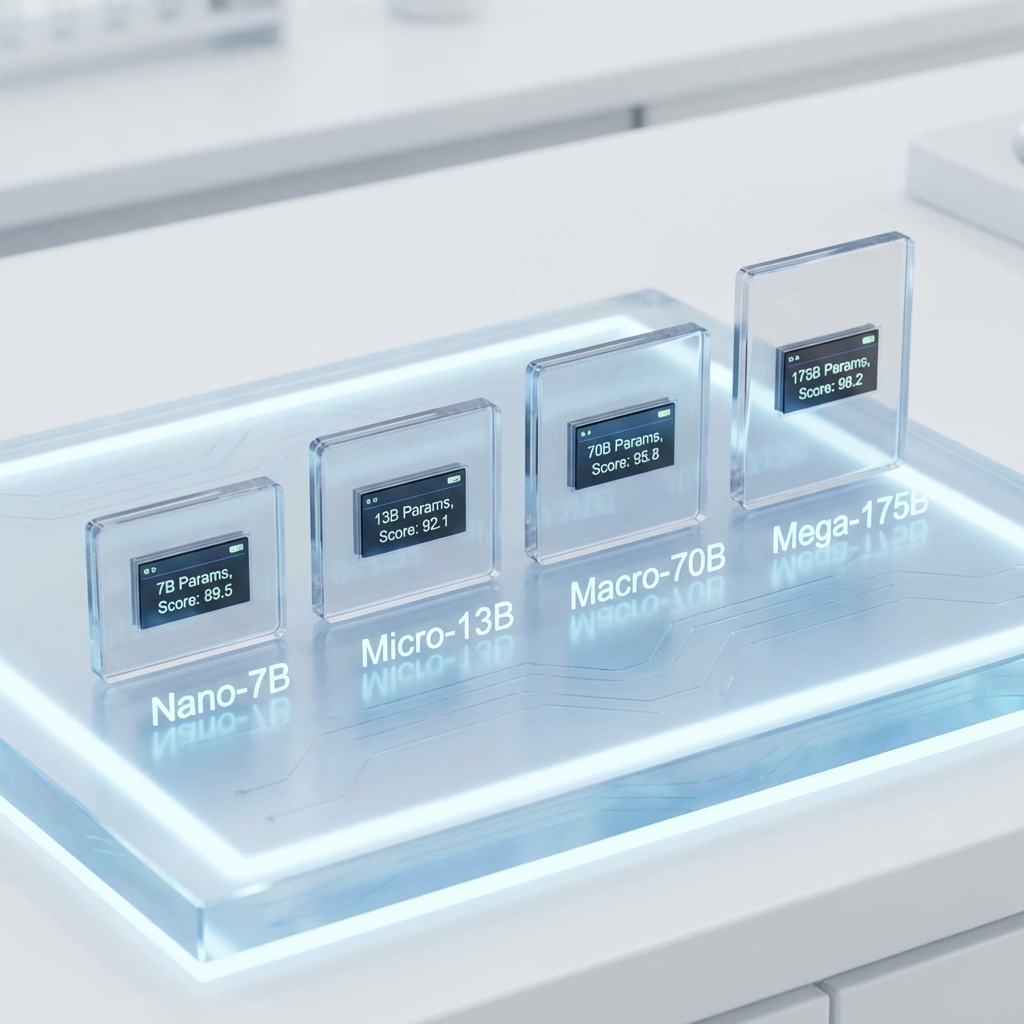

Breaking Down the Gemma 4 Model Family

Gemma 4 isn’t a single model — it’s a carefully architected family of four distinct models, each designed to serve different computational environments and use cases.

At the top of the family sits the 31B Dense Model, currently ranked third on the Arena AI text leaderboard, which is the industry’s most rigorous benchmark for open models. This is a full-scale model suited for servers and enterprise GPU clusters, capable of handling complex reasoning, multi-step analysis, and advanced natural language tasks with near-frontier performance.

Next is the 26B Mixture of Experts (MoE) model, ranked sixth on the same leaderboard. The MoE architecture is particularly interesting because it activates only a subset of its parameters for any given task, making it significantly more efficient than dense models of comparable total parameter count. This means faster inference at lower computational cost — a major advantage for production deployments at scale.

For developers targeting edge deployments, Google introduces the Effective 4B (E4B) model, optimized for consumer-grade GPUs and workstations. And at the smallest end, the Effective 2B (E2B) model is designed specifically for mobile devices — Android smartphones, IoT hardware, and even Raspberry Pi boards — making true on-device AI inference a practical reality for mainstream applications.

All four models share core capabilities including 128K to 256K token context windows, support for 140+ languages, native multimodal processing of images, video, and audio, and built-in agentic workflow support with function calling, structured JSON output, and system instruction handling.

Benchmark Results That Defy the Model’s Size

The headline claim from Google — that Gemma 4 “outcompetes models 20x its size” — sounds like marketing hyperbole until you examine the benchmarks. The 31B Dense Model’s third-place ranking on the Arena AI leaderboard places it ahead of models from major commercial providers that require far more computational resources to run.

On standard reasoning benchmarks including MMLU, HumanEval, and BIG-Bench Hard, Gemma 4’s smaller models consistently outperform equivalently sized models from other providers. The E4B model achieves scores that would have required a 20B+ parameter model just 18 months ago. This efficiency is the direct result of Gemma 4 being trained with techniques derived from Google’s most advanced proprietary research — techniques that compress knowledge and reasoning capability into fewer parameters without sacrificing output quality.

Code generation is another area where Gemma 4 shines. Google highlights high-quality code generation for local-first development as a key capability, and early developer testing confirms that all four models produce clean, well-structured code across major programming languages. For developers building coding assistants or automated code review tools, this opens up powerful offline-first workflows that don’t depend on cloud API availability.

On-Device AI: How Gemma 4 Is Redefining Mobile Intelligence

Perhaps the most transformative aspect of Gemma 4 is what it enables on the devices already in people’s pockets. The E2B model was developed in close collaboration with Google’s Pixel team, Qualcomm, and MediaTek — three organizations with deep expertise in mobile chip optimization. The result is a model that delivers near-zero latency inference directly on consumer smartphones without requiring a network connection.

This isn’t just a technical curiosity. On-device AI unlocks a category of applications that simply cannot exist with cloud-dependent models: privacy-sensitive personal assistants that never send data to a server, offline translation and transcription for users in low-connectivity regions, real-time audio processing and speech recognition embedded directly in mobile apps, and responsive AI features that work even when a user’s cellular connection drops.

The E2B and E4B models also support audio input — a feature Google specifically highlights for speech recognition use cases. Combined with the models’ 140+ language support, this creates a compelling foundation for globally accessible voice-powered applications that run entirely on the device.

For IoT developers, the ability to run a capable language model on hardware as modest as a Raspberry Pi opens entirely new possibilities: intelligent home automation systems that make local decisions without cloud round-trips, industrial monitoring applications with natural language interfaces, and educational tools deployable in resource-constrained environments anywhere in the world.

The Apache 2.0 License: A Game-Changer for Developers

Technical capability alone doesn’t explain why Gemma 4’s release has generated so much excitement. The licensing decision is equally significant. Previous generations of Gemma carried usage restrictions that limited certain commercial applications. Gemma 4 ships under the Apache 2.0 license — one of the most permissive open-source licenses in existence.

Apache 2.0 means developers can use Gemma 4 in commercial products without paying royalties, modify the model weights and fine-tune for specific use cases, redistribute modified versions, and integrate the models into proprietary software without open-sourcing the surrounding application code. This is a major shift that removes the hesitation many enterprises had about adopting Gemma in production environments.

This is a strategic move by Google that has significant implications for the competitive AI landscape. By releasing Gemma 4 under Apache 2.0, Google is effectively commoditizing a layer of AI capability that competitors charge for, while retaining a competitive advantage through its proprietary Gemini models, cloud infrastructure, and enterprise services. The strategy mirrors what Google did with Android: give away the platform to grow the ecosystem, then monetize through services built on top of it.

Gemma 4 vs. The Open-Source Competition

The open-source AI model space has become intensely competitive in 2026, with major contributions from Meta’s Llama series, Mistral AI, Alibaba’s Qwen series, and now Google’s Gemma 4. Each family brings different strengths to the table.

Meta’s Llama models remain dominant in raw community adoption and fine-tuning ecosystem size. Mistral’s models are known for exceptional efficiency and European data compliance. Qwen models lead in Chinese-language performance. Gemma 4’s differentiation is the combination of Google’s proprietary training techniques, strong multimodal capabilities across all model sizes, mobile-first optimization with hardware partnerships, and the credibility of being derived from Gemini 3 — currently one of the world’s most capable AI systems.

For developers choosing between these ecosystems, Gemma 4’s strong performance on the Arena leaderboard, combined with its unusually broad hardware compatibility and Apache 2.0 licensing, makes it a compelling default choice for new projects — particularly those targeting edge deployment or requiring multilingual support.

What Developers Can Build With Gemma 4 Right Now

With Gemma 4 available today via Google’s official channels, Hugging Face, and Kaggle, developers can immediately begin building a wide range of applications. The models are compatible with popular inference frameworks including llama.cpp, Ollama, vLLM, and Hugging Face Transformers, meaning integration into existing workflows is straightforward.

Immediate use cases include local coding assistants integrated directly into IDEs, privacy-first document analysis tools for legal and medical professionals, multilingual customer service chatbots deployable without cloud dependency, on-device personal productivity assistants for mobile apps, and custom fine-tuned models for specialized industry applications in healthcare, finance, and education.

Google has also confirmed that Gemma 4 is fully compatible with its Vertex AI platform for developers who want the convenience of managed cloud deployment while retaining the option to self-host. This hybrid approach — open weights, cloud optional — gives enterprises maximum flexibility in how they architect their AI infrastructure.

Conclusion

Google Gemma 4 represents more than an incremental improvement in open-source AI. It’s a statement about where the industry is heading: toward powerful, efficient models that developers own outright, that run on hardware from data center to smartphone, and that don’t require ongoing licensing fees or cloud subscriptions to remain viable. With its combination of frontier-competitive performance, Apache 2.0 licensing, on-device capabilities, and 140+ language support, Gemma 4 sets a new benchmark for what open AI can be.

Whether you’re a solo developer building a local coding assistant, an enterprise architect designing a privacy-compliant AI pipeline, or a researcher pushing the boundaries of what smaller models can do, Gemma 4 belongs in your toolkit. The 400 million downloads that preceded this release suggest the developer community already agrees — and with Gemma 4, the best is yet to come.

Leave a Reply