Something big is quietly taking shape inside Apple’s AI strategy. According to multiple reports confirmed in early 2026, Apple and Google have struck a landmark licensing deal — reportedly worth up to $1 billion per year — that will bring Google’s Gemini AI models directly into Siri on iOS 27. The project, codenamed Project Campos, represents a dramatic shift in how Apple plans to compete in the AI race. Here’s what it means for you.

What Is Project Campos?

Project Campos is Apple’s internal initiative to integrate third-party AI models into the Siri assistant framework via a new system called AI Extensions. Rather than relying solely on Apple’s own on-device intelligence, iOS 27 will allow Siri to dynamically route complex queries to powerful cloud-based models — starting with Google Gemini.

The deal gives Apple access to Gemini’s full reasoning capabilities, multimodal understanding, and web knowledge without having to build all of it from scratch. For Google, it’s a chance to embed Gemini into one of the most widely used mobile assistants on the planet, on a platform with over one billion active iPhone users.

Why Apple Chose Google Gemini

Apple’s own AI models have made impressive strides in on-device efficiency — Apple Intelligence, introduced in iOS 18, was praised for its privacy-first approach and local processing. But when it comes to deep reasoning, real-time web knowledge, and multimodal understanding, Apple’s models lag behind frontier models like Gemini and GPT-5.

Rather than spend years and billions trying to catch up, Apple has taken a pragmatic approach: license the best available models and integrate them seamlessly into the Siri experience. Google Gemini was reportedly chosen over OpenAI’s GPT models for two reasons. First, the two companies already have close ties (Google’s search deal with Apple is worth over $20 billion annually). Second, Gemini’s multimodal capabilities aligned well with Apple’s vision for a visual, conversational assistant.

How AI Extensions Will Work on iOS 27

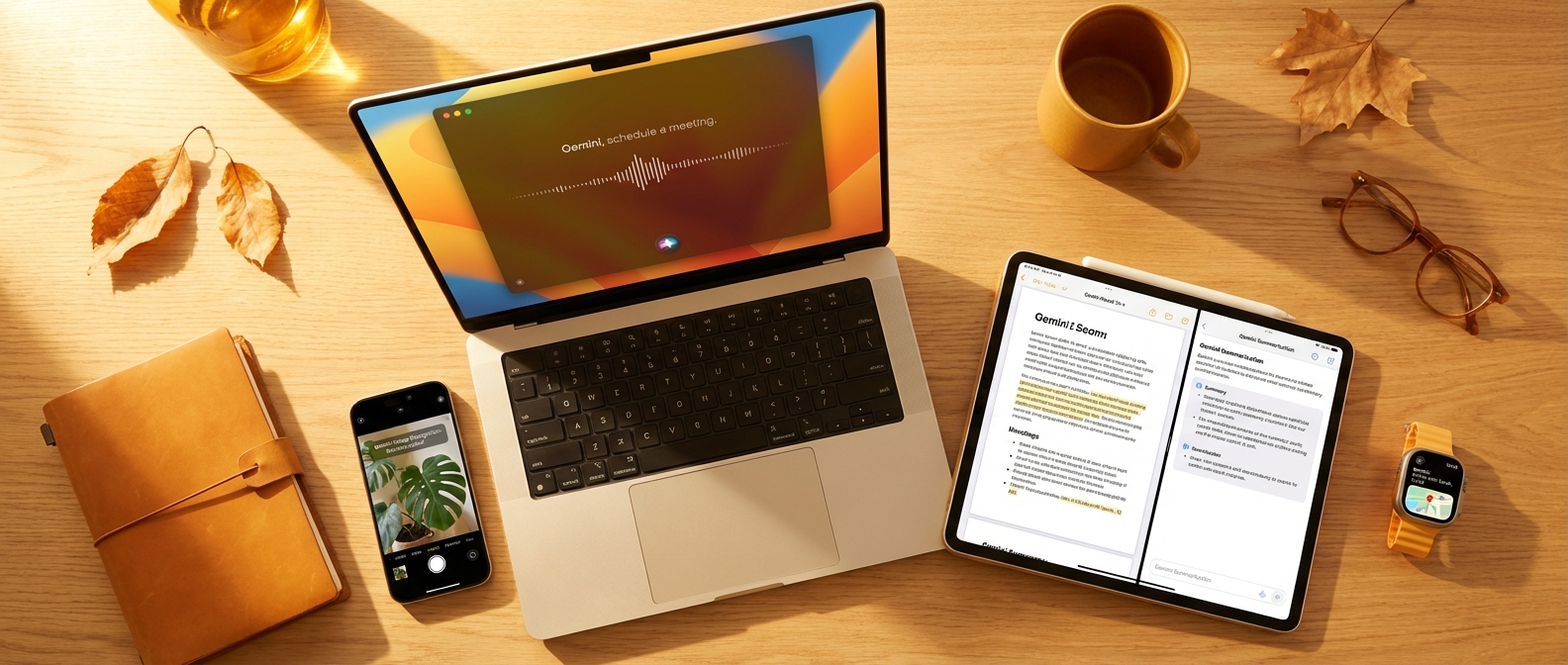

The AI Extensions framework is designed to be modular and user-transparent. When a user asks Siri something that exceeds on-device capabilities — a complex coding question, a long document summary, or a nuanced research query — Siri will automatically escalate the request to the appropriate AI extension.

Users will be notified when a query is being handled by a third-party model. Apple has emphasized that privacy protections remain in place: requests sent to Gemini will be anonymized and will not be stored or used to train Google’s models without explicit user consent. This is a key condition Apple reportedly insisted upon during negotiations.

The framework is also designed to be open to other AI providers over time. Apple has not confirmed which other companies might join the Extensions ecosystem, but industry speculation includes Anthropic and Perplexity as likely future partners.
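To make the escalation flow described above more concrete, here is a minimal Python sketch. It is purely illustrative: Apple has not published an AI Extensions API, so every name in it (`Query`, `ExtensionRouter`, `exceeds_on_device`, `anonymize`) and the word-count heuristic are assumptions made up for this example, and the "gemini" handler is a local stub rather than a real call to Google.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional

# All names below are hypothetical; none are real Apple or Google APIs.


@dataclass
class Query:
    text: str
    user_id: str                  # identifying field, never sent off-device
    needs_web: bool = False       # asks for current information
    has_attachment: bool = False  # photo, screenshot, or document


@dataclass
class Response:
    answer: str
    handled_by: str      # "on-device" or the name of an extension
    user_notified: bool  # True when a third-party model handled the query


def exceeds_on_device(query: Query, max_words: int = 30) -> bool:
    """Toy stand-in for 'too complex for the local model' (an assumption)."""
    too_long = len(query.text.split()) > max_words
    return query.needs_web or query.has_attachment or too_long


def anonymize(query: Query) -> dict:
    """Build the outgoing payload without the user identifier."""
    return {
        "text": query.text,
        "needs_web": query.needs_web,
        "has_attachment": query.has_attachment,
    }


class ExtensionRouter:
    """Keeps a registry of extensions and escalates hard queries to one."""

    def __init__(self) -> None:
        self._extensions: Dict[str, Callable[[dict], str]] = {}
        self._default: Optional[str] = None

    def register(self, name: str, handler: Callable[[dict], str]) -> None:
        self._extensions[name] = handler
        if self._default is None:
            self._default = name  # first registered extension is the default

    def handle(self, query: Query) -> Response:
        # Simple queries, or any query when no extension exists, stay local.
        if self._default is None or not exceeds_on_device(query):
            return Response(f"[local] {query.text}", "on-device", False)
        answer = self._extensions[self._default](anonymize(query))
        return Response(answer, self._default, True)


router = ExtensionRouter()
router.register("gemini", lambda payload: f"[gemini stub] {payload['text']}")

simple = router.handle(Query("Set a timer for ten minutes", user_id="u123"))
hard = router.handle(
    Query("What changed in today's headlines?", user_id="u123", needs_web=True)
)
print(simple.handled_by, simple.user_notified)
print(hard.handled_by, hard.user_notified)
```

The registry is what would let other providers join later: adding one is another `register` call, and the routing, notification flag, and anonymization step stay the same regardless of which model answers.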

What This Means for Users

For everyday iPhone users, Project Campos should mean a dramatically smarter Siri: one that can actually answer hard questions well, not just search the web and hand you a list of links. Specifically, users can expect improvements in four areas.

Complex reasoning — Siri will be able to handle multi-step questions, analyze documents you share with it, and give structured, detailed answers to research-type queries rather than deferring to Safari.

Coding and technical help — Developers will be able to ask Siri to help debug code, explain APIs, or write scripts — tasks currently handled far better by ChatGPT or Gemini’s standalone apps.

Real-time knowledge — Gemini’s web grounding capabilities will give Siri access to current information without requiring users to switch to a browser.

Multimodal understanding — Users will be able to share photos, screenshots, or documents with Siri and get intelligent, contextual responses powered by Gemini’s vision models.

The Competitive Implications

Project Campos reshapes the AI assistant landscape in a significant way. For years, the narrative has been that Apple is falling behind OpenAI and Google in the AI race. With this partnership, Apple sidesteps the need to win that race on its own — instead, it becomes the front-end interface for the best AI models in the world.

This is smart positioning. Apple’s strengths have always been hardware integration, user experience, and privacy. By partnering with Google on the backend while maintaining the Apple experience on the front end, it gets the best of both worlds.

For Google, embedding Gemini in Siri is a massive distribution win. It means Gemini will be the default AI brain for over a billion iPhone users — users who may never open the standalone Gemini app. This could significantly shift usage and revenue dynamics in Google’s favor as AI becomes more central to how people interact with their devices.

When Will This Launch?

Project Campos is expected to debut with iOS 27 in fall 2026. Apple typically announces new iOS versions at WWDC in June, so we can expect a public reveal around that time. The AI Extensions framework may reach developers in beta form before the fall release, potentially alongside the WWDC announcements.

The reported deal structure, worth up to $1 billion per year, suggests this is not a one-time experiment: Apple is committing to a multi-year AI partnership strategy that will likely evolve as both Gemini and Apple Intelligence mature.

Final Thoughts

Project Campos is a pragmatic, bold move from Apple. Rather than trying to out-engineer Google and OpenAI, Apple is leveraging its platform dominance to bring the best AI capabilities to its users. For consumers, this means a Siri that finally lives up to its potential. For the AI industry, it signals that the battle for AI supremacy will increasingly be won through distribution and integration — not just raw model performance.

Watch for the WWDC 2026 announcement in June for the full picture. Until then, keep following PickGearLab for all the AI news that matters.
