Amazon Web Services and OpenAI have struck a partnership that neither company would have predicted two years ago. In early 2026, AWS announced that OpenAI’s most powerful models — including GPT-5.4 — are now available natively through Amazon Bedrock, with a new capability called the Stateful Runtime Environment that changes how enterprise teams build and run long-running AI agents. Here’s what this partnership means and why it matters for developers and enterprises building on AI.

The Partnership: What’s Actually Happening

Amazon Bedrock has historically been positioned as AWS’s AI model marketplace: a managed service that lets enterprises access foundation models from Anthropic, Meta, Mistral, Cohere, and others through a unified API, without having to manage model infrastructure themselves. The notable absence from that lineup was always OpenAI, whose models were available only through OpenAI’s own API or Azure OpenAI Service.

That changes with this partnership. OpenAI models are now available directly in Amazon Bedrock, giving AWS customers access to the full GPT-5 family — including GPT-5.4’s 1-million-token context and native computer use — through the Bedrock API. For enterprises that have built their infrastructure on AWS, this removes a significant friction point: they no longer need to manage separate API credentials, billing relationships, and VPC configurations for OpenAI versus their other AI providers.

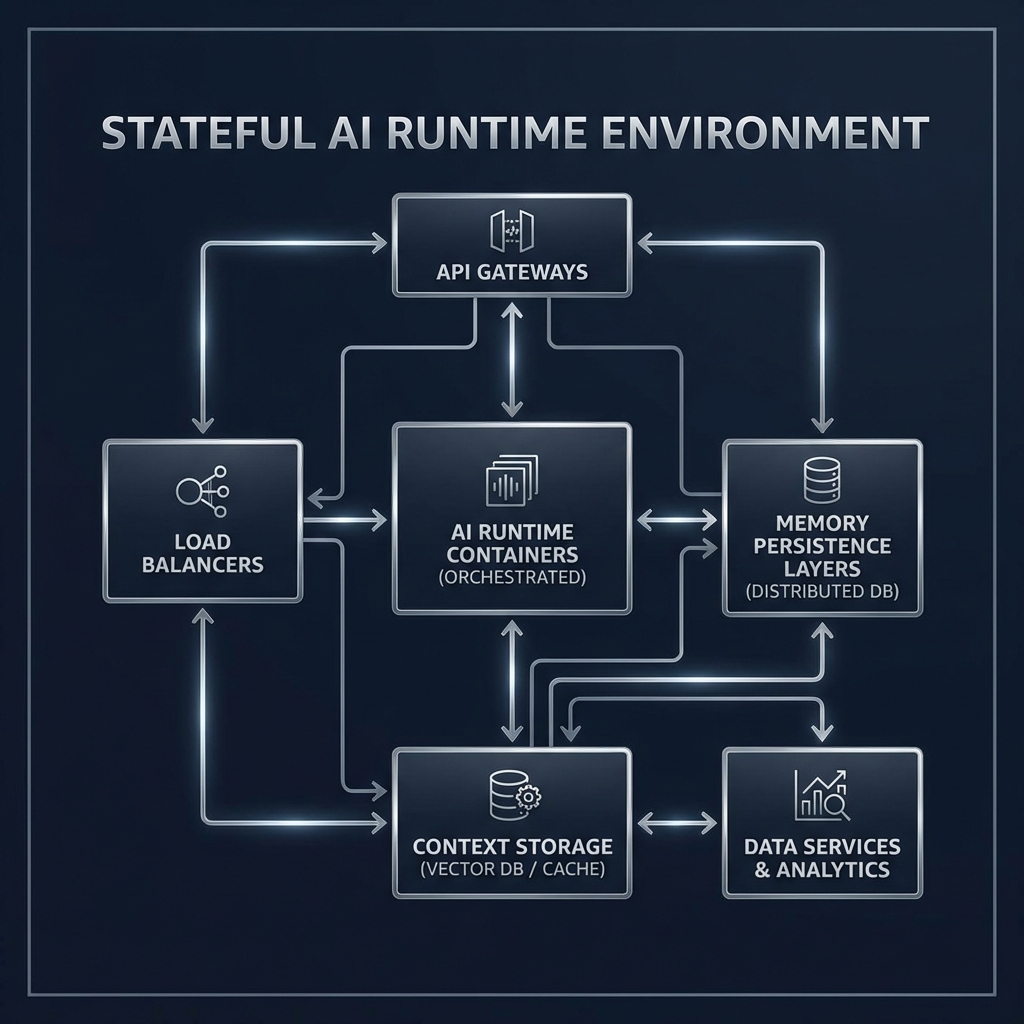

The partnership is built on a Stateful Runtime Environment — a new Bedrock capability that allows AI agents to maintain persistent state across multiple interactions and extended task sequences. This is different from standard API calls, which are stateless by default. Every call to a language model normally starts fresh with no memory of previous interactions unless the caller explicitly passes conversation history in the request.
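To make the stateless default concrete, here is a minimal Python sketch. The `stateless_model` function is a hypothetical stand-in for any model endpoint, not a Bedrock or OpenAI call; it can only see the messages passed in a single request, which is the behavior described above.

```python
from typing import Dict, List

Message = Dict[str, str]


def stateless_model(messages: List[Message]) -> str:
    """Hypothetical stand-in for a model endpoint: it sees only `messages`."""
    # Scan the supplied messages for a name the user introduced earlier.
    for msg in messages:
        text = msg["content"]
        if msg["role"] == "user" and text.startswith("My name is "):
            name = text[len("My name is "):].rstrip(".")
            return f"Your name is {name}."
    return "I don't know your name."


history: List[Message] = [{"role": "user", "content": "My name is Ada."}]
question: Message = {"role": "user", "content": "What is my name?"}

# Call 1: only the new question is sent, so the earlier turn is invisible.
without_history = stateless_model([question])

# Call 2: the caller resends the earlier turn along with the new question.
with_history = stateless_model(history + [question])

print(without_history)
print(with_history)
```

The second call only works because the caller carried the history forward. That bookkeeping is what a stateful runtime is meant to take over.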

What the Stateful Runtime Environment Changes

The Stateful Runtime Environment is designed specifically for agentic AI workflows — tasks that unfold over many steps, potentially across hours or days. In a stateful environment, an AI agent can start a complex task, pause it, resume it later, and maintain its full working context throughout. The agent remembers what it has done, what it has learned, and what it still needs to accomplish.

Consider what this enables in practice. A procurement agent could be kicked off on Monday to research vendor options, gather quotes, and draft a comparison report — and it could complete this work asynchronously, across multiple sessions, without losing context between steps. A software development agent could take on a multi-day feature implementation task, writing code, running tests, fixing failures, and updating documentation without requiring constant human supervision.

This is a meaningful advance over current agent frameworks, which typically require keeping the full conversation context in memory at all times — a constraint that limits how long agents can operate and how complex their tasks can be. The Stateful Runtime Environment offloads context management to AWS infrastructure, removing this ceiling.
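The pause-and-resume idea can be sketched in a few lines of Python. This is not the Stateful Runtime Environment's API, which the announcement does not detail; it is an illustration that assumes the agent's working context can be serialized to a JSON checkpoint. `AgentState`, `run_one_step`, and the file name are all hypothetical.

```python
import json
import os
import tempfile
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class AgentState:
    """Hypothetical working context for a multi-step task."""
    task: str
    pending_steps: List[str]
    completed_steps: List[str] = field(default_factory=list)
    notes: List[str] = field(default_factory=list)


def run_one_step(state: AgentState) -> None:
    """Perform the next pending step (simulated) and record the result."""
    step = state.pending_steps.pop(0)
    state.completed_steps.append(step)
    state.notes.append(f"finished: {step}")


def save_checkpoint(state: AgentState, path: str) -> None:
    """Write the full working context to disk as JSON."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(asdict(state), f)


def load_checkpoint(path: str) -> AgentState:
    """Rebuild the working context from a JSON checkpoint."""
    with open(path, encoding="utf-8") as f:
        return AgentState(**json.load(f))


checkpoint_path = os.path.join(tempfile.mkdtemp(), "procurement_agent.json")

# Session 1 (e.g. Monday): do one step, then pause by writing a checkpoint.
state = AgentState(
    task="vendor comparison",
    pending_steps=["research vendors", "gather quotes", "draft report"],
)
run_one_step(state)
save_checkpoint(state, checkpoint_path)
del state  # nothing is kept in process memory between sessions

# Session 2 (later): reload the checkpoint and finish the remaining steps.
resumed = load_checkpoint(checkpoint_path)
while resumed.pending_steps:
    run_one_step(resumed)

print(resumed.completed_steps)
```

In a managed runtime the checkpoint store would be the provider's infrastructure rather than a local file, but the shape of the problem is the same: the context has to live somewhere other than the running process.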

Why This Is Significant for Enterprise AI

For enterprise teams, the Bedrock + OpenAI integration solves several real problems simultaneously. First, it consolidates billing and access management. Large organizations often prefer to centralize their cloud spend through a single provider for audit, compliance, and cost management reasons. Having OpenAI available through AWS billing simplifies this considerably.

Second, it enables network-level security controls. Enterprises can route OpenAI API calls through their existing AWS VPC configurations, applying the same security policies, logging, and monitoring they use for all other AWS services. This is important for organizations in regulated industries where all outbound data flows need to be tracked and controlled.

Third, the Stateful Runtime Environment integrates with the broader AWS ecosystem, specifically AWS Lambda, Step Functions, S3, and IAM. This means AI agents built on Bedrock can natively read and write to S3 buckets, trigger Lambda functions, and interact with other AWS services as part of their workflows. That is tighter integration with AWS services than OpenAI’s own API offers on its own.

What This Means for Azure OpenAI Service

Azure OpenAI Service has been Microsoft’s primary competitive advantage in the enterprise AI market: it offered OpenAI models with the security, compliance, and integration story that enterprise buyers demand. The AWS-OpenAI partnership directly challenges that advantage. Enterprise teams that were reluctant to move to Azure just to get OpenAI model access now have an alternative that keeps them on AWS.

This doesn’t mean Azure OpenAI Service is under existential threat. Microsoft and OpenAI’s partnership runs much deeper than a distribution agreement, and Azure has significant integration advantages across Microsoft’s enterprise software stack. But it does mean that OpenAI model access alone no longer decides the choice between AWS and Azure. The models are now available on both clouds, and the decision comes down to which cloud’s ecosystem, pricing, and existing footprint best fit the organization.

Developer Experience and Availability

OpenAI models in Amazon Bedrock are available through the standard Bedrock API, which means existing Bedrock integrations can add OpenAI models with minimal code changes. The model IDs follow Bedrock’s standard naming convention. Pricing mirrors OpenAI’s standard API pricing with a small Bedrock infrastructure fee on top.
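As a rough illustration of what "minimal code changes" could look like, the sketch below builds two requests in the shape used by Bedrock's Converse API via boto3 and checks what differs between them. Both model IDs are placeholders invented for this example, since the announcement does not list the actual IDs, and the `send` helper assumes boto3 is installed and AWS credentials are configured.

```python
from typing import Any, Dict

# Placeholder IDs for illustration only; look up real IDs in the Bedrock console.
CURRENT_MODEL_ID = "provider-a.example-model-v1"
OPENAI_MODEL_ID = "openai.example-model-v1"


def build_converse_request(model_id: str, prompt: str, max_tokens: int = 512) -> Dict[str, Any]:
    """Build keyword arguments in the shape used by Bedrock's Converse API."""
    return {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def send(request: Dict[str, Any], region: str = "us-east-1") -> str:
    """Send the request with boto3; needs boto3 installed and AWS credentials."""
    import boto3  # imported lazily so the request builder runs without it

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.converse(**request)
    return response["output"]["message"]["content"][0]["text"]


prompt = "Summarize the attached vendor quotes."
before = build_converse_request(CURRENT_MODEL_ID, prompt)
after = build_converse_request(OPENAI_MODEL_ID, prompt)

# Only the model ID differs between the two requests.
changed_keys = [key for key in before if before[key] != after[key]]
print(changed_keys)
```

If the integration works as described, swapping providers would come down to changing one string, with credentials, logging, and network policy staying where they are.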

The Stateful Runtime Environment is in preview as of March 2026, available to AWS enterprise accounts. General availability is expected in Q2 2026. Pricing for stateful agent sessions is usage-based, charged per session-hour in addition to model inference costs.
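For budgeting, the pricing structure described here (a session-hour charge plus model inference) reduces to simple arithmetic. The sketch below shows the calculation; every rate in it is a made-up placeholder, not a published price, and the function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """All rates are made-up placeholders, not published prices."""
    usd_per_session_hour: float
    usd_per_1k_input_tokens: float
    usd_per_1k_output_tokens: float


def estimate_cost(rates: Rates, session_hours: float,
                  input_tokens: int, output_tokens: int) -> float:
    """Session-hour charge plus token-based inference charge, in USD."""
    session_cost = session_hours * rates.usd_per_session_hour
    inference_cost = (
        input_tokens / 1000 * rates.usd_per_1k_input_tokens
        + output_tokens / 1000 * rates.usd_per_1k_output_tokens
    )
    return round(session_cost + inference_cost, 2)


# Illustrative numbers only: a 6-hour agent session with moderate token usage.
placeholder_rates = Rates(
    usd_per_session_hour=0.10,
    usd_per_1k_input_tokens=0.002,
    usd_per_1k_output_tokens=0.008,
)
total = estimate_cost(placeholder_rates, session_hours=6,
                      input_tokens=400_000, output_tokens=50_000)
print(total)
```

The point of the exercise is that long-running agents add a time-based cost dimension: a session that sits idle for hours still accrues the session-hour charge even when inference spend is small.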

Final Thoughts

The Amazon Bedrock and OpenAI partnership is one of the more consequential enterprise AI infrastructure announcements of 2026. For AWS customers, it delivers OpenAI model access with the security, compliance, and ecosystem integration they need. For the broader AI industry, it signals that the model layer is becoming commoditized — available everywhere — and that competitive advantage will increasingly come from infrastructure, integration, and workflow capabilities rather than exclusive model access.

The Stateful Runtime Environment, in particular, is worth watching closely. If it delivers on its promise, it could meaningfully accelerate how quickly enterprise teams are able to deploy reliable, long-running AI agents in production. Follow PickGearLab as we track the general availability rollout and provide hands-on analysis.
