Every time you ask an AI model to write an email, generate code, or answer a complex question, data centers around the world quietly consume staggering amounts of electricity. U.S. AI infrastructure alone burned through approximately 415 terawatt-hours in 2024 — more than 10 percent of the country’s entire national energy output. By 2030, that figure is expected to double. Now, a team of researchers at Tufts University believes they may have found a fundamentally better way to build AI — one that slashes energy consumption by up to 100 times while actually making systems smarter, faster, and more capable of genuine reasoning.

The AI Energy Crisis No One Can Ignore

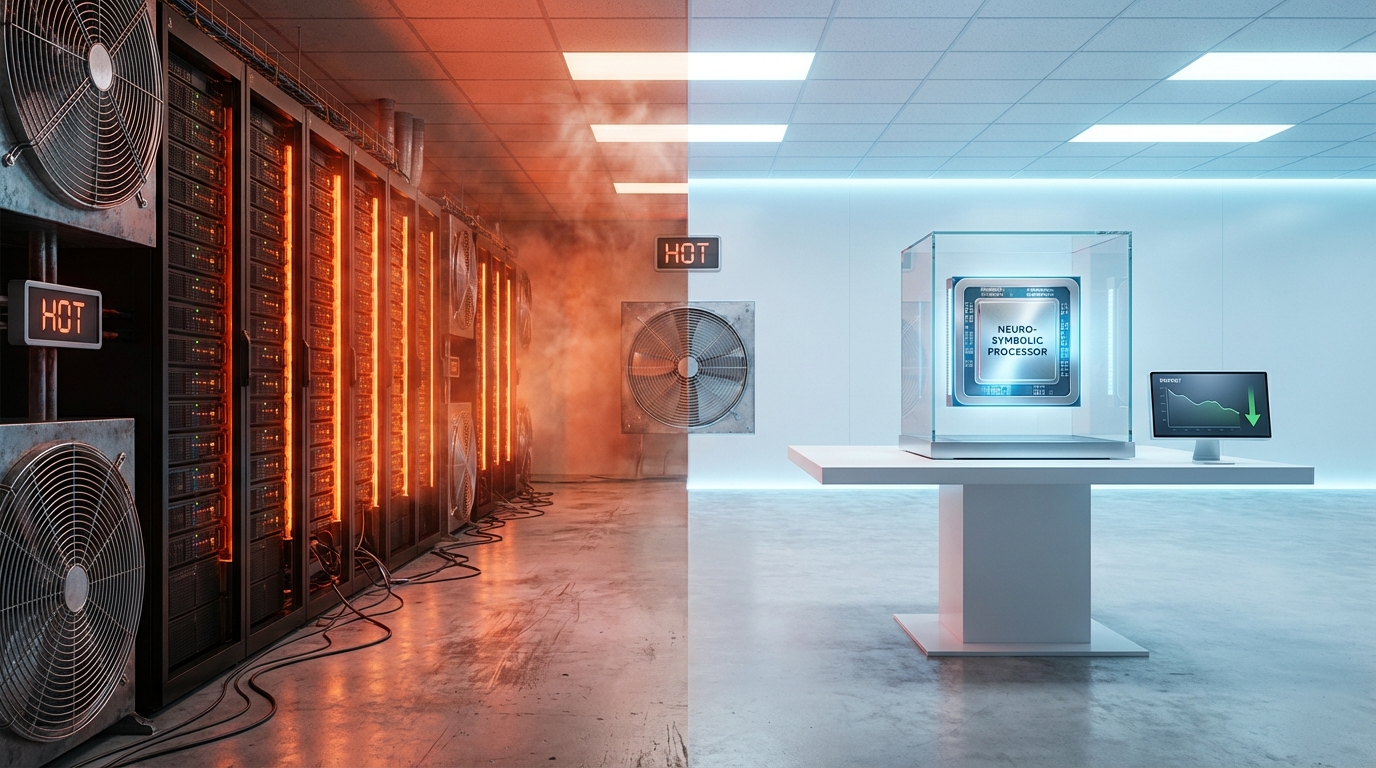

The rapid rise of large language models and autonomous AI agents has come with a hidden price tag: an unprecedented hunger for electrical power. Training a single frontier AI model can consume as much electricity as hundreds of homes use in a year. Running those models at scale — across billions of daily queries — compounds the problem to an industrial degree. Data centers are proliferating across every continent, utility grids are straining under the load, and the carbon footprint of so-called “intelligent” systems has become an urgent concern for policymakers, environmentalists, and technologists alike.

For years, the industry’s default answer was to throw more hardware at the problem — bigger GPUs, faster chip interconnects, more elaborate liquid cooling towers. But a growing cohort of researchers is asking a fundamentally different question: what if the way we build AI is itself inherently inefficient? What if there’s a smarter architecture waiting to be discovered? The work coming out of Tufts University’s Human-Robot Interaction Laboratory suggests the answer is emphatically yes — and the solution is called neuro-symbolic AI.

What Is Neuro-Symbolic AI, and Why Does It Matter?

At its core, neuro-symbolic AI is a hybrid approach that bridges two very different traditions of thinking about machine intelligence. The “neuro” side refers to conventional neural networks — the statistical engines powering today’s large language models and image generators, which learn from massive datasets and excel at recognizing complex patterns. The “symbolic” side draws on classical AI techniques: structured logical rules, formal reasoning, and abstract knowledge representation that mirrors how humans consciously decompose problems into steps.

Rather than relying purely on brute-force pattern matching, a neuro-symbolic system can apply logical constraints that guide its learning process from the start. Instead of trying every conceivable move through thousands of rounds of trial and error, it reasons about which moves are even worth attempting. Think of it as the difference between a chess player who has memorized millions of game positions versus one who genuinely understands strategic principles — both may play well, but the second player arrives at good decisions far more efficiently and handles unfamiliar positions with greater confidence.

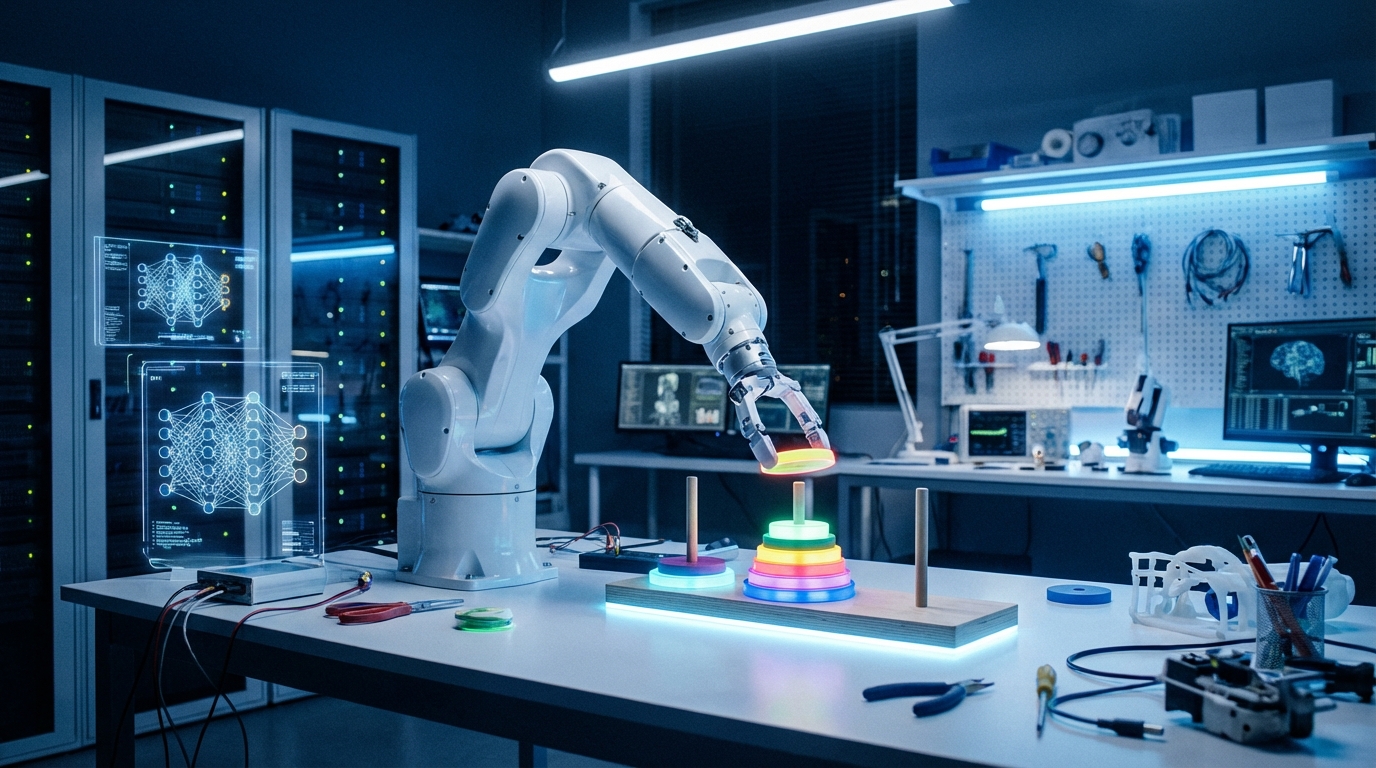

Matthias Scheutz, the Karol Family Applied Technology Professor at Tufts School of Engineering who leads the research, explains the advantage simply: “A neuro-symbolic VLA can apply rules that limit trial and error during learning and reach solutions much faster.” His team’s research applies this principle specifically to vision-language-action (VLA) models, the class of AI architectures that drives modern robotic systems capable of perceiving their environment and taking physical actions in response.

The Results That Stunned the Research World

To benchmark the neuro-symbolic approach against conventional methods, Scheutz and his team selected the Tower of Hanoi — a classic puzzle that requires moving a stack of disks between three pegs according to strict ordering rules. It is a problem beloved by AI researchers precisely because it demands genuine logical planning, not mere statistical pattern recognition. You cannot solve it by luck or brute memorization. The results of the comparison were striking.
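To make the puzzle concrete, here is a minimal sketch of the classic recursive solution, the kind of purely symbolic planning the benchmark rewards. It is a textbook illustration, not the Tufts team's code; the function name `solve_hanoi` and the peg labels `A`, `B`, and `C` are assumptions chosen for this example.

```python
from typing import List, Tuple

Move = Tuple[str, str]  # (source peg, destination peg)


def solve_hanoi(n: int, src: str = "A", dst: str = "C", aux: str = "B") -> List[Move]:
    """Return the optimal move list for n disks using the classic recursion."""
    if n == 0:
        return []
    # Move the top n-1 disks out of the way, move the largest disk,
    # then restack the n-1 disks on top of it.
    return (
        solve_hanoi(n - 1, src, aux, dst)
        + [(src, dst)]
        + solve_hanoi(n - 1, aux, dst, src)
    )


moves = solve_hanoi(3)
print(len(moves))  # 7, i.e. 2**3 - 1
print(moves[:3])   # [('A', 'C'), ('A', 'B'), ('C', 'B')]
```

The optimal solution for n disks always takes 2^n - 1 moves, so the plan grows quickly with stack height even though the rule that generates it stays tiny.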

The standard VLA model — representing the current state of the art in robotic AI systems — achieved a success rate of just 34 percent on the Tower of Hanoi tasks. The neuro-symbolic VLA achieved 95 percent. When the researchers then introduced novel, unseen puzzle configurations that neither model had encountered during training, the performance gap became even more dramatic: the neuro-symbolic system successfully solved 78 percent of the new challenges. The conventional model solved zero percent — a complete failure to generalize.

But the performance story was almost secondary to the energy revelation. Training the standard model required more than 36 hours of continuous computation, which serves as the energy baseline. Training the neuro-symbolic model took just 34 minutes and used only 1 percent of that energy. During live operation, the gap narrowed but remained staggering: the neuro-symbolic system ran on approximately 5 percent of the power its conventional counterpart required. Put as ratios, that is roughly a 100x efficiency gain in training and a 20x gain in inference.
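The reported percentages convert to efficiency multiples with simple arithmetic. The sketch below uses only the figures quoted above; the variable names are illustrative, and the training-time ratio is a derived lower bound because the baseline is given only as "more than 36 hours."

```python
# Figures as reported in the article; the ratios are derived here.
baseline_train_minutes = 36 * 60   # "more than 36 hours", so a lower bound
neuro_symbolic_train_minutes = 34

train_energy_fraction = 0.01       # 1 percent of baseline training energy
inference_energy_fraction = 0.05   # about 5 percent of baseline operating power

time_speedup = baseline_train_minutes / neuro_symbolic_train_minutes
train_gain = 1 / train_energy_fraction
inference_gain = 1 / inference_energy_fraction

print(f"training time speedup: at least {time_speedup:.1f}x")
print(f"training energy gain: {train_gain:.0f}x")
print(f"inference energy gain: {inference_gain:.0f}x")
```

Note that the wall-clock speedup (about 64x) and the energy gain (100x) are different quantities: the reported energy saving is larger than the time saving alone would imply.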

Why This Approach Works — The Science Behind the Leap

The intuition behind why neuro-symbolic AI is so much more efficient comes down to the fundamental difference between searching randomly and reasoning purposefully. Conventional deep learning models are, at their core, optimization engines. They adjust billions of numerical parameters based on feedback from an enormous volume of trials, gradually improving through a process that is powerful but massively wasteful from an energy perspective. Every failed attempt consumes computation cycles. Every dead-end path carries a real energy cost.

By embedding symbolic rules directly into the learning loop, the neuro-symbolic system dramatically narrows the search space before a single training iteration begins. If a logical rule states “never place a larger disk on top of a smaller disk,” the AI does not need to attempt that combination thousands of times to learn it leads nowhere — it simply never considers it. This constraint-guided learning means the model converges on correct solutions in a fraction of the time and computation that brute-force neural approaches require.
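A minimal sketch of that idea: a symbolic rule filters candidate moves before any learned scoring is consulted. This is an illustration under stated assumptions, not the Tufts implementation. The state encoding, the helper names, and the `scores` dictionary (a stand-in for a neural policy's output) are all hypothetical.

```python
from typing import Dict, List, Tuple

# Peg name -> disks from bottom to top; a larger int is a larger disk.
State = Dict[str, List[int]]
Move = Tuple[str, str]


def all_moves(state: State) -> List[Move]:
    """Every ordered pair of distinct pegs, legal or not."""
    return [(s, d) for s in state for d in state if s != d]


def is_legal(state: State, move: Move) -> bool:
    """Symbolic rule: the source peg must hold a disk, and a larger
    disk may never be placed on top of a smaller one."""
    src, dst = move
    if not state[src]:
        return False
    return not state[dst] or state[src][-1] < state[dst][-1]


def legal_moves(state: State) -> List[Move]:
    return [m for m in all_moves(state) if is_legal(state, m)]


def choose_move(state: State, scores: Dict[Move, float]) -> Move:
    """Pick the highest-scoring legal move. `scores` is a hypothetical
    stand-in for a learned policy; illegal moves are never considered."""
    return max(legal_moves(state), key=lambda m: scores.get(m, 0.0))


state = {"A": [3, 2, 1], "B": [], "C": []}
scores = {("B", "A"): 0.9, ("A", "C"): 0.6, ("A", "B"): 0.3}

print(len(all_moves(state)))       # 6
print(legal_moves(state))          # [('A', 'B'), ('A', 'C')]
print(choose_move(state, scores))  # ('A', 'C')
```

The highest raw score here belongs to an illegal move, yet it is never selected, because the rule removes it before scoring matters. A learner working behind such a filter spends no trials discovering what the rule already encodes.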

The approach also generalizes with far greater reliability. Because the system has internalized actual logical principles, not just correlations between data points, it can apply those principles to situations it has never encountered. This is precisely why the neuro-symbolic model excelled on novel puzzle configurations while the conventional model collapsed entirely. The difference is not just speed. It is the difference between memorization and genuine understanding.

Implications for the Future of AI Infrastructure

If neuro-symbolic techniques can be scaled beyond robotic manipulation benchmarks and applied broadly across AI architectures, the implications are profound. The projected doubling of AI energy consumption by 2030 is not inevitable — it is a consequence of the architectural choices the industry is currently making. A paradigm shift toward hybrid neuro-symbolic systems could allow AI capabilities to keep advancing without a corresponding explosion in power demand, land use for data centers, and carbon emissions.

For enterprises deploying AI at scale, the economic implications are equally significant. If it carried over to larger models, a 100x reduction in energy use during training could cut the energy portion of model development costs, which can run to millions of dollars, to a small fraction of current budgets. A 20x reduction during inference would transform the unit economics of serving AI across billions of daily interactions. These are not marginal improvements: they are gains of one to two orders of magnitude in operational efficiency.

What Comes Next

The Tufts research is currently focused on robotic manipulation tasks, but the team’s methodology has clear pathways to broader application. Neuro-symbolic approaches are being actively explored across natural language processing, medical diagnosis support, autonomous vehicle decision-making, and scientific discovery workflows — all domains that involve structured knowledge and logical constraints that are natural fits for symbolic reasoning layers added atop neural foundations.

The research will be formally presented at the International Conference on Robotics and Automation (ICRA) in Vienna in 2026, where the team anticipates significant interest from both academic institutions and industry partners. As AI energy costs become an increasingly urgent strategic priority for companies, governments, and grid operators worldwide, solutions that deliver both high performance and radical efficiency will command serious attention and investment.

The neuro-symbolic AI breakthrough from Tufts University represents more than an incremental advancement in robotics benchmarks. It is a signal that the dominant paradigm of ever-larger, ever-hungrier neural networks may have a serious and viable challenger. With up to a 100x reduction in energy consumption, a 95 percent success rate on the benchmark tasks, and the ability to generalize to novel situations where conventional models completely fail, this research arrives at exactly the right moment in AI’s development. The question is whether the broader industry, currently captivated by the scaling arms race, is prepared to embrace the power of reasoning over brute force. If this research is any guide, the smarter bet may be on the smarter architecture.
