In late March 2026, the AI world was blindsided, not by a planned product launch or a carefully orchestrated press release, but by an accident. Anthropic, the AI safety company that prides itself on careful, deliberate development, inadvertently exposed internal documents describing one of the most powerful AI models ever built. The model’s name: Claude Mythos. And what researchers found in those documents is sending shockwaves through the technology industry and straight into the halls of government.

The story of Claude Mythos is more than a tale of corporate embarrassment. It’s a window into where artificial intelligence is headed — a frontier where the models being quietly trained in Silicon Valley research labs are rapidly outpacing the world’s ability to understand, regulate, or defend against them.

What Is Claude Mythos? Anthropic’s Most Powerful AI Yet

Claude Mythos, internally also referred to as “Capybara,” is Anthropic’s next-generation AI model, and by every metric revealed in the leak, it represents a genuine leap beyond anything the company has previously released. The draft blog post that was accidentally made public describes Mythos as “by far the most powerful AI model we’ve ever developed,” outperforming Claude Opus 4.6, the company’s current frontier model, by dramatic margins across software coding, academic reasoning, and cybersecurity tasks.

The leaked documentation explains that Capybara is a new name for a new tier of model: larger and more intelligent than Anthropic’s Opus models, which were until now the company’s most powerful. This framing is significant. Anthropic has historically positioned its Opus-class models as the pinnacle of its capabilities. Mythos doesn’t just nudge those benchmarks forward — it reportedly creates an entirely new tier above them.

When asked for comment, Anthropic officially confirmed the model’s existence, describing it as “a general purpose model with meaningful advances in reasoning, coding, and cybersecurity” that it “considers a step change and the most capable we’ve built to date.” That measured corporate language understates what independent researchers reading the leaked documents found: a model that, in Anthropic’s own internal assessment, is operating at a level that raises serious safety concerns before it’s even been released to the public.

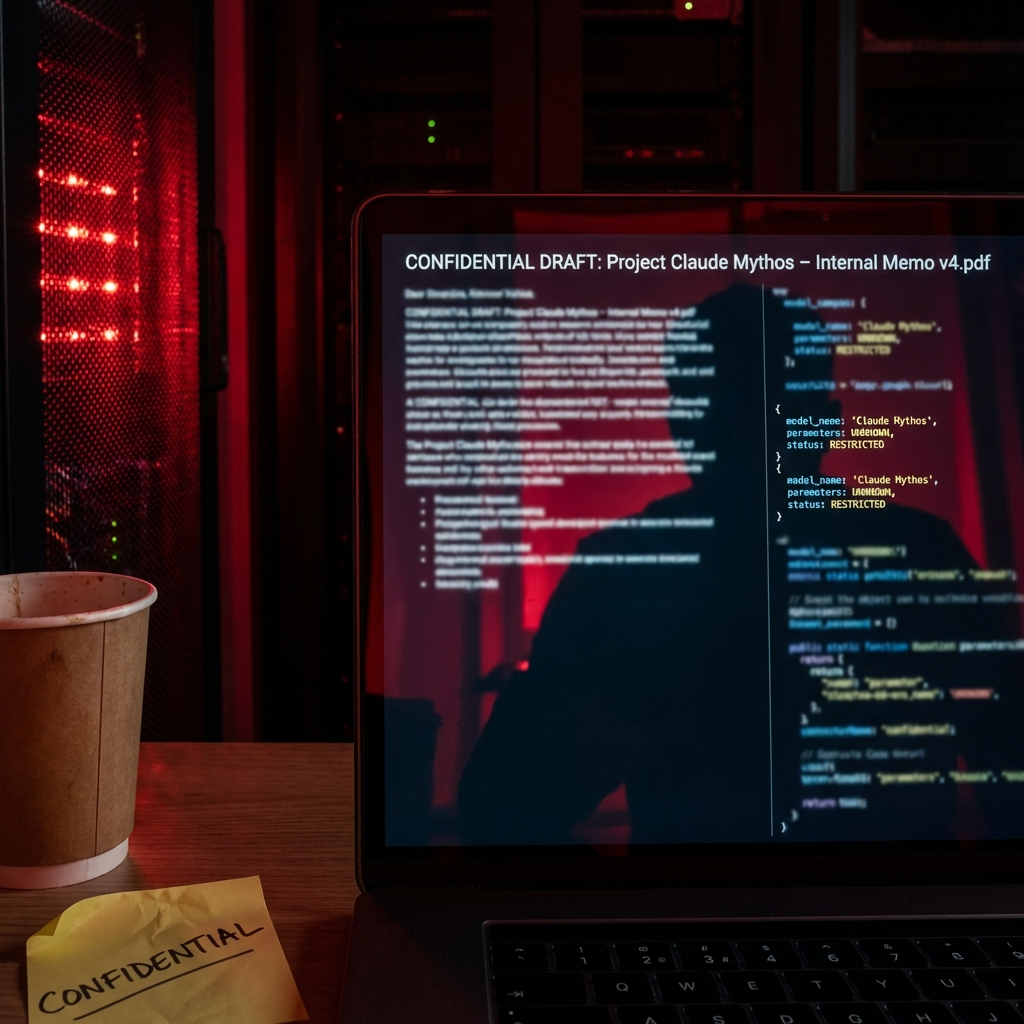

The Leak: How Anthropic Accidentally Exposed Its Secret Model

The chain of events that led to the disclosure was almost comically mundane. On March 26, 2026, a misconfiguration in Anthropic’s content management system left a series of internal documents and draft blog posts publicly accessible on the web. Security researchers Roy Paz and Alexandre Pauwels discovered the exposed data store and quickly realized what they were looking at.

Among the exposed documents was a detailed draft announcement for Claude Mythos, written in Anthropic’s signature style of careful, safety-conscious framing. The irony wasn’t lost on anyone: a company that has built its entire brand around responsible AI development had accidentally leaked the details of its most powerful — and potentially most dangerous — model yet.

The exposure didn’t stop there. Just five days later, on March 31, Anthropic suffered a second accidental disclosure when the source code for Claude Code, the company’s AI-powered developer tool, was inadvertently made public. Within hours, developers across the internet were mirroring and analyzing the codebase, uncovering architectural details about how Anthropic had engineered its three-layer memory system to manage context at scale.

Two accidental disclosures in less than a week raised urgent questions about internal security practices at one of the world’s most consequential AI companies — and put a spotlight on the model at the center of it all.

Inside the Benchmarks: How Far Ahead Is Mythos?

The benchmark numbers buried in Anthropic’s leaked documents are what captured the most attention from the AI research community. Compared to Claude Opus 4.6 — itself one of the strongest models currently available — Mythos reportedly achieves dramatically higher scores across nearly every category of evaluation.

In coding tasks, Mythos is said to perform at a level that surprised even Anthropic’s own researchers. On academic reasoning benchmarks, it consistently outperforms not just existing Claude models but every publicly known model as of its evaluation date. These aren’t incremental improvements — the gap described in the leaked documents is wide enough that Anthropic felt compelled to create an entirely new product tier to classify it.

Perhaps most striking is the cybersecurity performance. The leaked documents state plainly that Mythos is currently far ahead of any other AI model in cyber capabilities — a claim that would be remarkable under any circumstances but becomes especially alarming given the specific nature of those capabilities. The model isn’t just better at writing secure code or identifying vulnerabilities in controlled test environments. According to the internal assessment, it can reason about and execute offensive cyber operations at a level that existing defensive tools are not equipped to handle.

The current AI benchmark landscape — where GPT-5.4 scores an impressive 83% on OpenAI’s GDPval test and Gemini 3.1 Pro leads on GPQA Diamond reasoning tasks — may already be outdated if Mythos performs as described.

The Cybersecurity Threat: Unprecedented AI Attack Capabilities

The section of the leaked document that drew the sharpest reactions was Anthropic’s own framing of the cybersecurity risks posed by Mythos. The company did not downplay them. Internal documentation describes the model as posing “unprecedented cybersecurity risks” and warns that its capabilities presage an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders.

That phrase — “far outpace the efforts of defenders” — is a stark acknowledgment from a leading AI lab that the offensive potential of next-generation models may be developing faster than the security community’s ability to respond. The asymmetry between offense and defense in cybersecurity has always been a challenge; AI systems that can rapidly identify, probe, and exploit vulnerabilities at scale could make that asymmetry dramatically worse.

Reports indicate that Anthropic has been privately briefing senior government officials about Mythos and the risk landscape it represents, warning that large-scale cyberattacks facilitated by advanced AI systems are significantly more likely in the second half of 2026. The company’s dual position, simultaneously developing the model and warning governments about it, reflects the broader tension that defines the current AI moment: labs believe they must push capabilities forward to stay competitive, even as they acknowledge they may be accelerating risks that existing institutions are not prepared to manage.

Security experts who have reviewed the leaked documentation are particularly concerned about the combination of advanced reasoning and autonomous action. Earlier AI models could identify a known vulnerability if prompted correctly. Mythos, based on the descriptions in the leaked materials, appears capable of independently strategizing about attack vectors, adapting to defensive countermeasures, and executing multi-step exploits with minimal human guidance.

Anthropic’s Response and the Safety Paradox

Anthropic’s public response to the leak has been measured and deliberate. The company confirmed the model’s existence, acknowledged the misconfiguration that caused the exposure, and reiterated its commitment to responsible development. In a brief public statement, Anthropic noted that Mythos is currently in early access with a limited set of cybersecurity partners — a deployment strategy designed to test the model in controlled environments before any broader release.

The company’s founders, including CEO Dario Amodei, have long argued that safety-focused labs must remain at the frontier of AI development precisely because the alternative — ceding the frontier to less safety-conscious developers — would produce worse outcomes. That argument is under renewed scrutiny following the Mythos leak. If Anthropic’s own assessment is that this model poses unprecedented risks, critics are asking why it exists at all, and what “safety-focused development” means in practice when the output is a tool capable of enabling large-scale cyberattacks.

Supporters of Anthropic’s approach counter that understanding the risk landscape requires building the systems that create it — that governments and defenders need to know what’s coming in order to prepare. The company’s proactive briefings of government officials, whatever their strategic motivation, represent a form of transparency that not every AI lab has offered.

The Bigger Picture: AI Power, Accountability, and What Comes Next

The Mythos leak arrives at a moment when the entire AI industry is grappling with the gap between the pace of model development and the pace of regulatory response. OpenAI’s GPT-5.5, codenamed “Spud,” completed pretraining in late March and is reportedly undergoing safety evaluation ahead of a potential Q2 2026 release. Google’s Gemini 3.1 Pro is setting new records on reasoning benchmarks. Open-weight models are rapidly closing the gap with frontier proprietary systems.

In this environment, the accidental disclosure of Claude Mythos functions as something like an unwanted stress test for the industry’s accountability infrastructure. It surfaces a question that has been building for years: at what point do AI capabilities become so consequential that the decision to train and deploy them should require more than the internal sign-off of a private company, however well-intentioned?

For now, Mythos remains in limited early access. Anthropic has not announced a public release date. The AI research community is watching closely — not just to see what the model can do when it’s finally released, but to see how Anthropic navigates the obligations that come with building something it has described, in its own words, as unprecedented.

Conclusion: A New Frontier, Whether We’re Ready or Not

Claude Mythos may be the most significant AI model of 2026 that most people haven’t yet been able to use. Its accidental exposure has given the world an early, unfiltered look at where the AI frontier actually is: not where the press releases place it, but where Anthropic’s own internal benchmark documents do, and those documents tell a different, more complex story.

The coming months will test whether the frameworks Anthropic, governments, and the broader security community have put in place are adequate for a world where AI systems can reason about and execute cyberattacks with unprecedented sophistication. The Mythos leak didn’t just reveal a model. It revealed an urgent gap between the pace of AI development and the pace of our collective readiness to handle what that development produces.

For businesses, security professionals, and policymakers, the message is clear: the timeline for preparing for a more powerful AI-enabled threat landscape just got shorter. Claude Mythos is already built. The question now is what we do before it’s widely deployed.
