NVIDIA Nemotron 3 Super has arrived as one of the most ambitious open-weight AI models ever released. Unveiled at GTC 2026 on March 11, this 120-billion-parameter hybrid model activates only 12 billion parameters per token, delivering up to 5x higher throughput than its predecessor while pushing the boundaries of what open agentic AI can accomplish.

For developers building multi-agent systems, autonomous coding pipelines, or enterprise cybersecurity tools, Nemotron 3 Super represents a significant leap forward. Here is everything you need to know about its architecture, performance, and how to start using it today.

What Is NVIDIA Nemotron 3 Super?

Nemotron 3 Super is an open-weight large language model developed by NVIDIA Research. It belongs to the broader Nemotron 3 family announced at GTC 2026, which also includes smaller variants like Nemotron 3 Nano. However, the Super variant stands apart as the flagship model designed for complex agentic reasoning tasks.

The model packs 120 billion total parameters but activates only 12 billion per inference step. This sparse activation strategy, powered by a Mixture of Experts architecture, means developers get frontier-class intelligence without the compute costs typically associated with models of this scale. NVIDIA released the model with open weights, full training datasets exceeding 10 trillion tokens, and complete development recipes on Hugging Face.

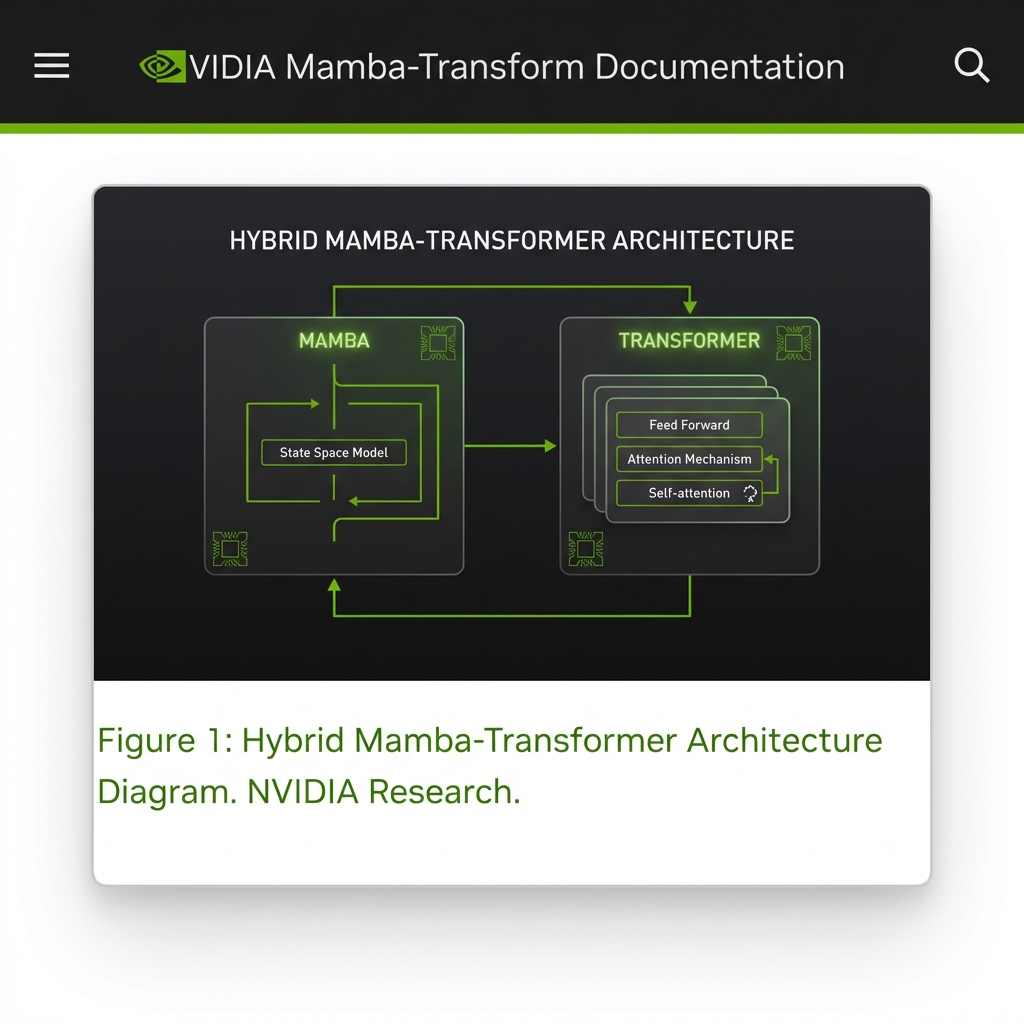

Hybrid Mamba-Transformer Architecture Explained

At the core of NVIDIA Nemotron 3 Super lies a hybrid Mamba-Transformer backbone. This is not a standard transformer model. Instead, NVIDIA combined Mamba layers for efficient long-sequence processing with Transformer layers for precision attention-based reasoning. The result is a model that handles extremely long contexts without the quadratic memory scaling that plagues pure transformer architectures.

The architecture uses what NVIDIA calls Latent Mixture of Experts, or LatentMoE. In this approach, tokens are projected into a smaller latent dimension before being routed to specialized expert networks. This reduces computation per token while maintaining high accuracy. In addition, the model incorporates Multi-Token Prediction, which allows it to generate multiple output tokens in a single forward pass.

On SPEED-Bench, Nemotron 3 Super achieves an average acceptance length of 3.45 tokens per verification step, compared to 2.70 for DeepSeek-R1. This means real-world inference speeds can reach up to 3x wall-clock speedups without requiring a separate draft model. For developers running inference at scale, this translates directly into lower costs and faster response times.

Performance Benchmarks That Stand Out

NVIDIA Nemotron 3 Super delivers impressive results across multiple evaluation suites. On PinchBench, a benchmark that measures how well language models perform as the central reasoning engine of an autonomous agent, the model scores 85.6 percent across the full test suite. This makes it the best-performing open model in its weight class for agentic tasks.

Throughput numbers are equally striking. In an 8,000 token input and 16,000 token output configuration, Nemotron 3 Super achieves up to 2.2x higher inference throughput than comparable 120B open models and up to 7.5x higher throughput than Qwen3.5-122B. These gains come from a combination of the sparse MoE architecture and native NVFP4 quantization optimized for NVIDIA Blackwell GPUs.

The model also supports a native 1-million-token context window. On the RULER benchmark at 1M context length, Nemotron 3 Super outperforms both competing 120B-class models, demonstrating that its long-context capabilities are not just theoretical but hold up under rigorous evaluation. As a result, agents built on this model can retain full workflow state in memory without losing track of earlier instructions or context.

Built for Agentic AI Workflows

NVIDIA designed Nemotron 3 Super specifically for multi-agent systems. The model powers what NVIDIA calls the NemoClaw agent stack, a framework for building autonomous AI agents that can reason over complex tasks, use tools, and coordinate with other agents in real time.

Two primary use cases stand out. In software development, Nemotron 3 Super can serve as the reasoning backbone for coding agents that autonomously write, test, debug, and deploy code across large codebases. The 1-million-token context window means these agents can hold entire repositories in memory, reducing the fragmented reasoning that plagued earlier code-generation models.

In cybersecurity triaging, the model enables agents to analyze threat intelligence feeds, correlate alerts across multiple security tools, and recommend response actions. The combination of fast inference throughput and deep reasoning capability allows security operations centers to process alerts significantly faster than traditional rule-based systems. For example, NVIDIA demonstrated an autonomous security agent at GTC that could triage and prioritize 500 alerts per minute while maintaining contextual awareness across the entire incident timeline.

Open Weights, Open Data, Open Recipes

One of the most significant aspects of Nemotron 3 Super is its openness. NVIDIA released the model under a permissive open model license, giving developers the freedom to customize, fine-tune, and deploy it on their own infrastructure without restrictive usage limitations.

The release goes beyond just model weights. NVIDIA published over 10 trillion tokens of pre-training and post-training datasets, along with 15 reinforcement learning training environments and complete evaluation recipes. This level of transparency is rare among frontier-class models and gives the research community the tools to reproduce, verify, and build upon NVIDIA’s work.

The model also features native NVFP4 pretraining optimized for NVIDIA Blackwell hardware. On the B200 GPU, this delivers 4x faster inference compared to FP8 on the H100, while maintaining accuracy. This means organizations that have already invested in NVIDIA’s latest hardware can extract maximum value from the model immediately.

How to Access NVIDIA Nemotron 3 Super

Getting started with Nemotron 3 Super is straightforward. The model weights are available on Hugging Face under the identifier nvidia/nemotron-3-super-120b-a12b. Developers can also access the model through NVIDIA NIM, which provides optimized inference containers for deployment on NVIDIA GPUs.

For those who want to experiment without local hardware, several API providers now offer Nemotron 3 Super endpoints. OpenRouter lists the model with free-tier access for testing, and NVIDIA’s own build.nvidia.com platform provides interactive model cards with live inference capabilities. Enterprise customers can deploy the model through NVIDIA AI Enterprise for production-grade support and optimization.

The complete training recipes and datasets are hosted alongside the model weights, so teams that want to fine-tune Nemotron 3 Super for domain-specific tasks can follow NVIDIA’s documented methodology step by step.

Final Thoughts: Why Nemotron 3 Super Matters

NVIDIA Nemotron 3 Super is not just another large language model. It represents a strategic bet by NVIDIA that the future of AI lies in open, efficient, and agent-ready models that can run on enterprise hardware without proprietary lock-in. The combination of a hybrid Mamba-Transformer architecture, sparse expert activation, and a 1-million-token context window creates a model uniquely suited for the autonomous AI workflows that enterprises are racing to deploy.

For developers and organizations evaluating their next foundation model, Nemotron 3 Super deserves serious consideration. Its benchmark results, open licensing, and hardware-optimized inference stack make it one of the most compelling options in the current landscape. As agentic AI continues to evolve from research concept to production reality, models like Nemotron 3 Super will play a central role in shaping what autonomous AI systems can achieve.

Stay tuned to PickGearLab for the latest AI model reviews, benchmark breakdowns, and hands-on guides to help you navigate the rapidly changing world of artificial intelligence.

Leave a Reply