Alibaba just made a significant move in the open-source AI race. Qwen 3.5 Small, the latest release from Alibaba’s Qwen team, is a family of capable, openly licensed models ranging from 0.8B to 9B parameters, and the benchmarks are turning heads. Under the Apache 2.0 license, these models can be used commercially as long as you keep the license text and attribution notices, making them immediately interesting for any organization that wants capable AI without per-token API costs or vendor lock-in.

Here’s a full breakdown of what Qwen 3.5 Small is, what it can do, and why the open-source AI community is paying close attention.

What Is Qwen 3.5 Small?

Qwen 3.5 Small is a family of compact but powerful language models released by Alibaba’s AI research division. The “Small” designation refers to the parameter count: the family spans 0.8B, 1.8B, 3B, and 9B parameter sizes, which puts these models in the “efficient” category compared to frontier models like GPT-5 or Gemini 3, whose parameter counts are undisclosed but widely assumed to be far larger.

But a small parameter count doesn’t mean small capability. Qwen 3.5 Small models are multimodal, meaning they can process both text and images, and they’ve been trained on a high-quality multilingual dataset with particular strength in Chinese, English, and other major languages. The 9B model in particular punches well above its weight class on standard benchmarks, competing with models two to three times its size from other providers.

The Apache 2.0 license is the headline feature from a business perspective. Unlike many open-weight models that come with use restrictions (prohibitions on commercial use, limitations on deployment scale, or requirements to share derivative works), Apache 2.0 imposes only light conditions: include the license, keep the attribution notices, and mark files you have changed. You can use Qwen 3.5 Small in a commercial product, fine-tune it, modify it, and redistribute it without paying Alibaba anything.

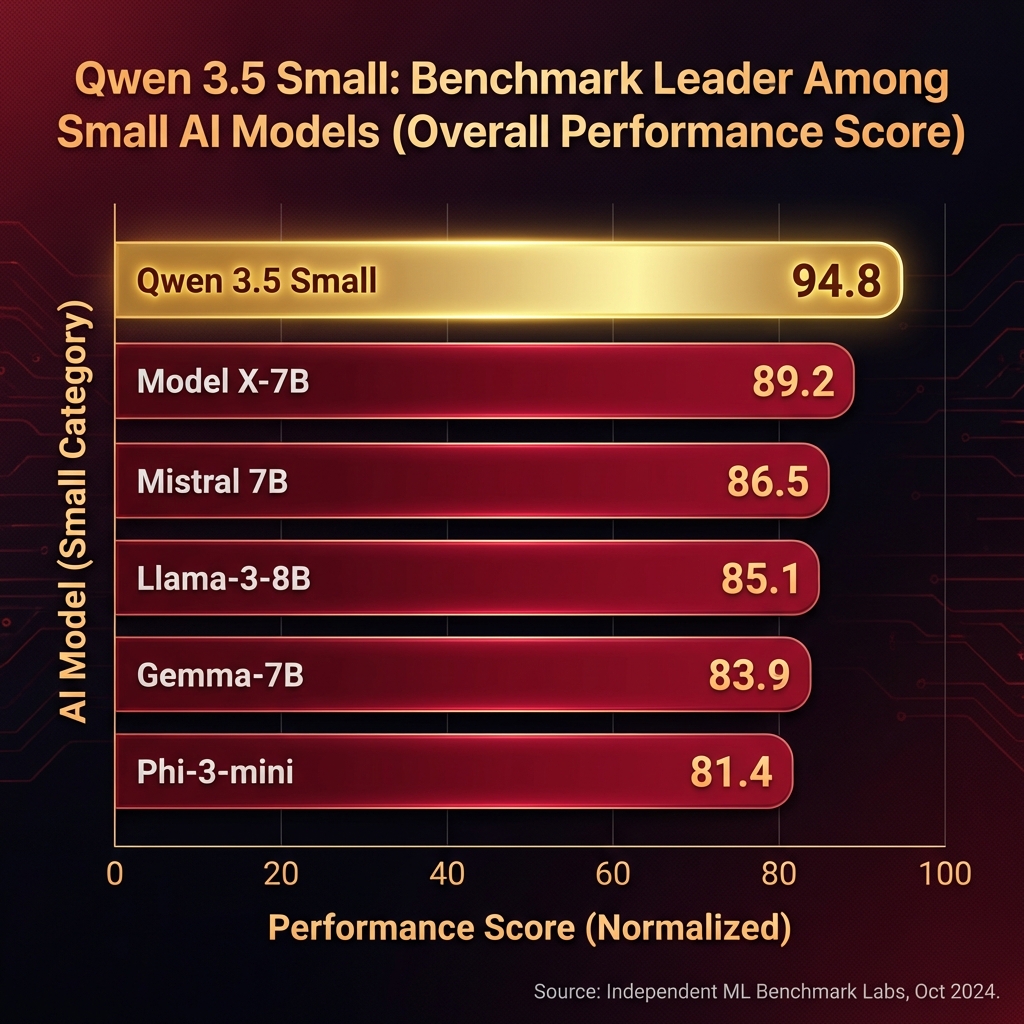

Benchmark Performance

Alibaba has released benchmark results showing Qwen 3.5 Small’s performance across standard evaluations. On MMLU — a comprehensive academic knowledge benchmark — the 9B model scores 74.2%, outperforming Meta’s Llama 3.1 8B (68.9%) and matching Mistral 7B v0.3 on several subtasks. On HumanEval coding tasks, the 9B model achieves 62.4% pass@1, which is competitive with models significantly larger than itself.
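For readers unfamiliar with the coding metric, pass@1 is the share of problems where a single sampled solution passes the unit tests. Here is a minimal Python sketch of the commonly used unbiased pass@k estimator. The per-problem sample counts are made up for illustration and are not Alibaba's data.

```python
from math import comb

def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one problem: n samples drawn, c of them correct."""
    if n - c < k:
        return 1.0  # every size-k subset contains at least one correct sample
    return 1.0 - comb(n - c, k) / comb(n, k)

# Hypothetical results: (samples generated, samples that passed) per problem.
results = [(10, 10), (10, 5), (10, 0), (10, 3)]

# The benchmark score is the mean of the per-problem estimates.
score = sum(pass_at_k(n, c, 1) for n, c in results) / len(results)
print(f"pass@1 = {score:.1%}")
```

With k = 1 the estimator reduces to the fraction of correct samples per problem, averaged over all problems.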

The 3B model is particularly interesting for edge and on-device deployment. It scores 67.1% on MMLU, which is strong for its size, and runs efficiently on consumer hardware. A MacBook Pro with 16GB of RAM can run the 3B model at useful inference speeds, opening up possibilities for fully local, private AI deployment without any cloud dependency.
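A quick back-of-the-envelope calculation shows why a 3B model fits comfortably in 16GB. The sketch below estimates memory for the weights alone at common precisions. It assumes the nominal parameter counts quoted above and ignores the KV cache, activations, and runtime overhead, so real usage will be higher.

```python
# Bytes needed to store one weight at common precisions.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}

def weight_memory_gb(params_billions: float, precision: str) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * 1e9 * BYTES_PER_PARAM[precision] / 1e9

# Nominal sizes of the family as listed in this article.
for size in (0.8, 1.8, 3.0, 9.0):
    row = ", ".join(f"{p}: {weight_memory_gb(size, p):.1f} GB" for p in BYTES_PER_PARAM)
    print(f"{size}B -> {row}")
```

By this estimate the 3B model needs roughly 6 GB at 16-bit precision and about 1.5 GB at 4-bit, which leaves headroom on a 16GB machine.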

Multilingual performance is another standout. Qwen 3.5 Small was trained with particular attention to non-English languages, and benchmarks show strong performance in Chinese, Japanese, Korean, Arabic, and major European languages — significantly better than equivalently sized English-centric models from Western providers.

Multimodal Capabilities

The full Qwen 3.5 Small family includes multimodal variants — dubbed Qwen3.5-VL — that can process images alongside text. The vision-language models can answer questions about images, analyze charts and diagrams, describe visual content, and perform document understanding tasks on photos of PDFs, forms, and handwritten text.

For the 9B vision model, Alibaba reports strong performance on visual understanding benchmarks, with the model correctly interpreting complex technical diagrams and multilingual document scans. This capability at the 9B scale is notable — most competitive vision-language models at this size struggle with complex visual reasoning tasks.

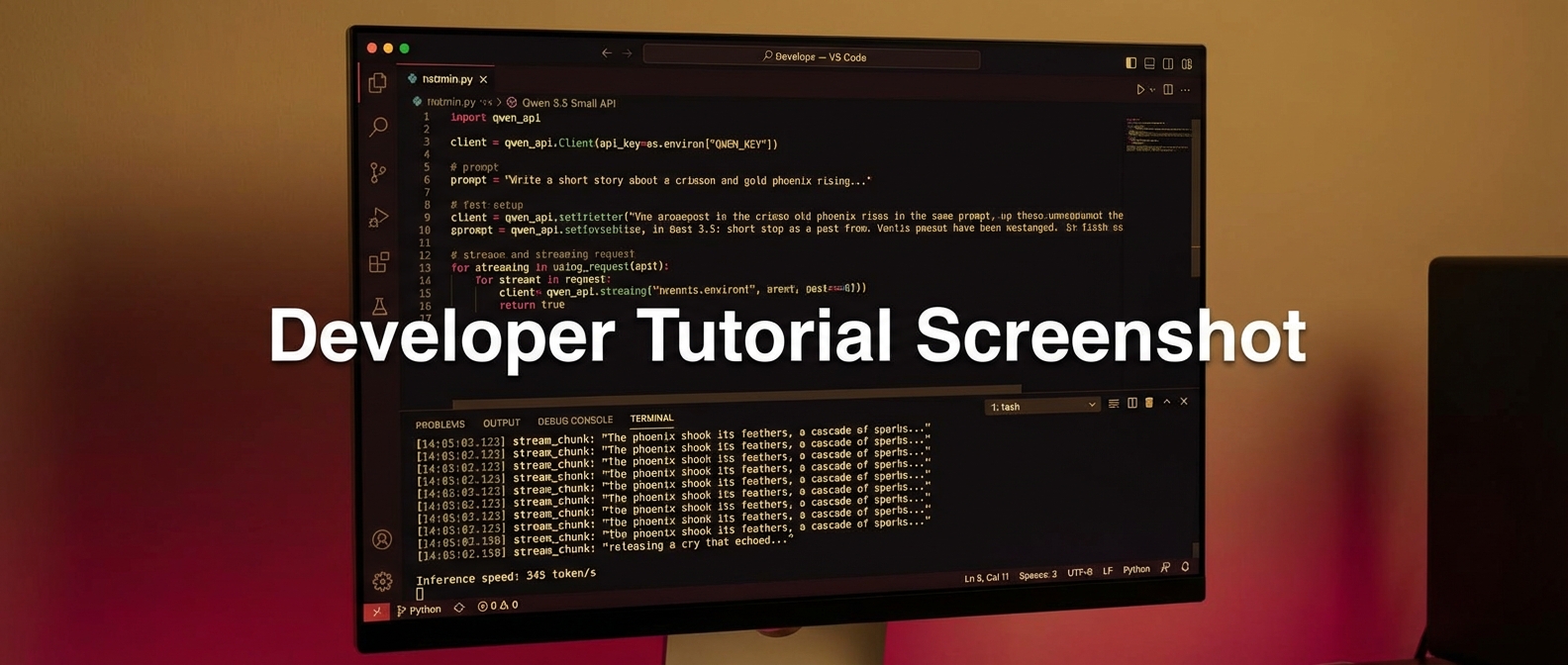

How to Use Qwen 3.5 Small

Qwen 3.5 Small models are available on Hugging Face in standard transformer format, compatible with the transformers library and tools like Ollama, LM Studio, llama.cpp, and vLLM. You can download and run them locally, or deploy them on any GPU cloud provider.
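Several of these tools, including vLLM, Ollama, LM Studio, and the llama.cpp server, can expose an OpenAI-compatible HTTP endpoint. This means a locally hosted model can be queried with nothing but the Python standard library. The sketch below assumes such a server is already running. The base URL, port, and model name are placeholders, not official identifiers, so swap in whatever your server reports.

```python
import json
import urllib.request

# Assumptions: a local OpenAI-compatible server is listening at BASE_URL, and
# MODEL_NAME matches the name that server registered. Both are placeholders.
BASE_URL = "http://localhost:8000/v1"
MODEL_NAME = "qwen3.5-small-9b"  # hypothetical identifier

def build_chat_request(prompt: str, max_tokens: int = 256) -> urllib.request.Request:
    """Build a POST request for the /chat/completions endpoint."""
    payload = {
        "model": MODEL_NAME,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def ask(prompt: str) -> str:
    """Send the prompt and return the text of the first completion."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Once a server is up, calling `ask("Summarize the Apache 2.0 license in one sentence.")` returns the model's reply as a string. Because the request shape is the same across these tools, switching between them usually means changing only the two constants.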

For teams that want managed inference without self-hosting, Alibaba offers Qwen 3.5 Small through its DashScope API, with pay-per-token pricing that’s significantly cheaper than GPT or Claude API equivalents, given the smaller model size. Third-party providers including Together AI, Replicate, and Groq have also added Qwen 3.5 Small to their model libraries.

Fine-tuning is permitted under the Apache 2.0 license, and the tooling makes it practical. Tools like Unsloth and LlamaFactory support Qwen 3.5 Small for efficient fine-tuning on consumer hardware, meaning teams can create custom domain-specific versions without significant infrastructure investment.
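One reason this kind of fine-tuning fits on consumer hardware is that such tools typically train small low-rank adapters (LoRA) rather than every weight in the model. The sketch below shows the arithmetic. The layer dimensions, layer count, and rank are hypothetical values chosen for illustration, not Qwen 3.5 Small's actual architecture.

```python
def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters LoRA adds to one d_in x d_out weight matrix.

    LoRA learns two small matrices, A (rank x d_in) and B (d_out x rank).
    """
    return rank * (d_in + d_out)

# Hypothetical architecture, for illustration only.
HIDDEN = 4096
LAYERS = 32
ADAPTED_PER_LAYER = 4  # e.g. four attention projections, assumed square here
RANK = 16

trainable = LAYERS * ADAPTED_PER_LAYER * lora_params(HIDDEN, HIDDEN, RANK)
full = LAYERS * ADAPTED_PER_LAYER * HIDDEN * HIDDEN

print(f"LoRA trainable parameters: {trainable:,}")
print(f"Share of the adapted matrices: {trainable / full:.2%}")
```

Under these assumptions the adapters amount to well under 1% of the weights they modify, so the gradients and optimizer state stay small even when the base model is large.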

Who Should Use Qwen 3.5 Small?

Startups and indie developers building AI-powered products who want to avoid per-token API costs will find Qwen 3.5 Small compelling. The 3B and 9B models can run on affordable GPU instances or even consumer hardware, enabling AI features at near-zero marginal cost per query.

Enterprise teams with data privacy requirements can deploy Qwen 3.5 Small entirely on-premises, keeping all data within their own infrastructure. The Apache 2.0 license keeps the legal side of commercial deployment simple, and the model weights can be stored in your own secure environment.

Research teams and academics can use Qwen 3.5 Small as a base for experimentation, fine-tuning, and novel architecture research without the restrictions that come with some other open-weight models.

Multilingual applications — particularly those serving Asian markets — will benefit from Qwen 3.5 Small’s strong performance in Chinese, Japanese, and Korean, which significantly outpaces equivalently sized Western-centric models.

Final Thoughts

Qwen 3.5 Small is a meaningful contribution to the open-source AI ecosystem. The combination of competitive benchmark performance, Apache 2.0 licensing, multimodal capabilities, and strong multilingual support makes this a genuinely useful family of models for a wide range of use cases.

The 9B model is the one to watch for teams that need solid general-purpose capability with full deployment flexibility. The 3B model is the one to watch for edge and on-device deployment scenarios where resource constraints matter. Both are available today on Hugging Face — follow PickGearLab as we publish hands-on testing and fine-tuning guides for the Qwen 3.5 Small family.
